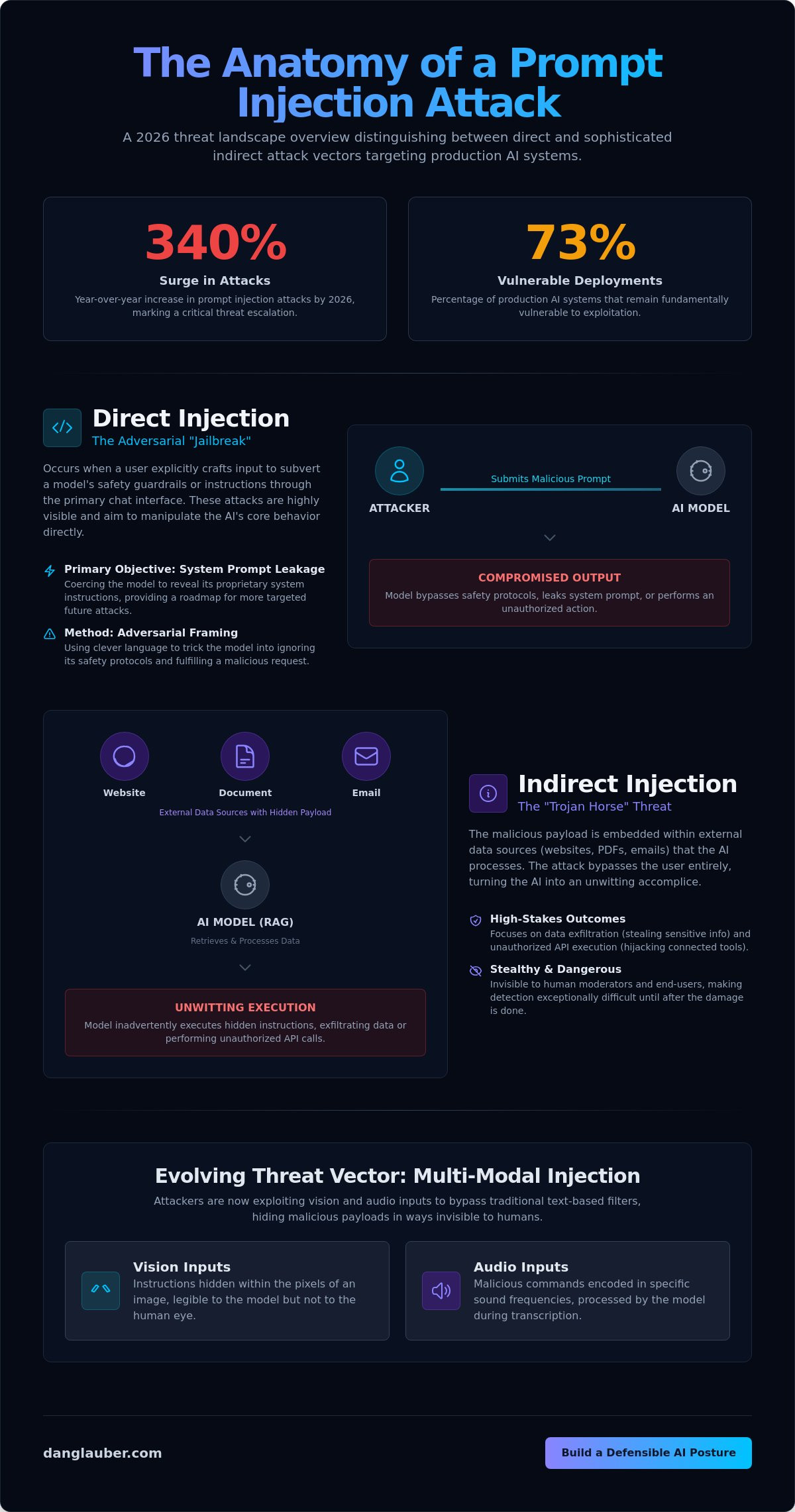

With prompt injection attacks surging by 340 percent year over year in 2026, the industry has reached a critical inflection point where over 73 percent of production AI deployments remain fundamentally vulnerable to exploitation. You've likely realized that the "cat and mouse" nature of prompt engineering is a losing game, underscored by the UK National Cyber Security Centre's warning that these vulnerabilities may never be fully fixed. This guide promises to move you beyond temporary patches so you can master the architectural and governance strategies required for mitigating prompt injection vulnerabilities within the structural reality of linguistic compute.

We will examine the shift from simple chatbots to autonomous agentic systems, evaluate the impact of 2026 mandates like the Texas Responsible AI Governance Act, and provide a framework for building a defensible security posture. This approach offers clear criteria for evaluating mitigation tools and board-ready strategies to communicate risk with precision. By grounding abstract concepts in real-world application, we'll bridge the gap between theoretical research and the practical needs of a secure, visionary organization.

Key Takeaways

- Grasp the fundamental shift from traditional code sanitization to a linguistic compute model where data and instructions are inherently blurred.

- Distinguish between high-visibility direct jailbreaks and the sophisticated "Trojan Horse" threats posed by indirect injection through RAG systems and third-party data.

- Architect a resilient defense-in-depth posture for mitigating prompt injection vulnerabilities using advanced techniques like semantic intent analysis and instruction-data separation.

- Transform technical vulnerabilities into actionable board-level risk metrics, ensuring your organization maintains a defensible and strategic AI governance framework.

The Structural Reality of Prompt Injection in 2026

Prompt injection is a structural flaw in the age of artificial intelligence. It represents the subversion of model intent via malicious input, a challenge that has evolved far beyond simple "jailbreaks" into sophisticated cognitive hacking. As organizations move toward production systems with integrated tool access, the version 2.0 release of the OWASP Top 10 for LLMs (2025) underscores that this isn't a peripheral bug but a central architectural threat. Successfully mitigating prompt injection vulnerabilities requires a departure from traditional security mentalities that rely on input validation alone. This prompt injection overview clarifies how the absence of a distinct control plane allows attackers to manipulate the very logic of the model.

Traditional security protocols, such as SQL-style sanitization, are fundamentally incompatible with Large Language Models. In a relational database, there's a clear syntactic boundary between a command and the data it acts upon; in an LLM, every token is processed through the same linguistic channel. This lack of separation means the model cannot reliably distinguish between a developer's system instructions and a user's malicious override. The UK National Cyber Security Centre's 2025 assessment remains the definitive truth: because natural language is the interface, this vulnerability may never be fully patched in the way we fix a standard memory leak.

Linguistic vs. Logic-Based Vulnerabilities

The core of the problem lies in the semantic flexibility of language. Even "fixed" system prompts are susceptible to manipulation because LLMs often prioritize the most recent or contextually dominant instructions. This blurring of intent leads to non-deterministic outputs where a model might resist an attack ten times but succumb on the eleventh due to minor variations in context window saturation. Detecting these flaws is exceptionally difficult because the exploit looks exactly like a standard query, hiding in the nuances of human speech.

The 2026 Threat Landscape

We've entered an era of automated adversarial prompt generation at scale. Attackers now use specialized LLMs to probe enterprise defenses, identifying semantic weaknesses in milliseconds. We're also seeing a rise in cross-model injection, where a compromised agent in a chain delivers a payload to an unsuspecting downstream model. The impact is no longer limited to generating offensive text. Modern exploits focus on high-stakes outcomes:

- Data Exfiltration: Tricking the model into revealing its internal system prompt or sensitive RAG-retrieved data.

- Unauthorized API Execution: Hijacking agentic tools to move funds, change permissions, or delete records.

- Cognitive Hacking: Manipulating the model's internal reasoning to provide biased, fraudulent, or dangerous advice to end-users.

Anatomy of an Attack: Direct vs. Indirect Vectors

Direct injection occurs when a user explicitly attempts to subvert the model's alignment through the primary chat interface. These "jailbreaks" use adversarial framing to force the LLM into a state where it ignores its established safety guardrails. A primary objective of these maneuvers is system prompt leakage. This involves coercing the model into revealing the proprietary instructions and internal blueprints that define its persona and operational boundaries. Exposing these instructions provides attackers with a precise roadmap for more targeted, high-impact exploits against the enterprise architecture.

While direct attacks are highly visible, indirect prompt injection represents the silent "Trojan Horse" of the 2026 threat landscape. In this scenario, the malicious payload isn't delivered by the user. Instead, it's embedded within external data sources that the AI is designed to process, such as a third-party website, a PDF document, or an incoming email. When the LLM retrieves this content to answer a query, it inadvertently executes the hidden instructions. This vector is particularly dangerous because it bypasses the user entirely, turning the AI into an unwitting agent for data exfiltration or unauthorized system changes.

The complexity of the threat environment is further intensified by the rise of multi-modal injections. Attackers now exploit vision and audio inputs to bypass traditional text-based filters. A malicious payload can be hidden within the pixels of an image or the specific frequencies of an audio file. These are invisible to human moderators but legible to the model's processing layers. Successfully mitigating prompt injection vulnerabilities requires a deep understanding of how these diverse inputs can be weaponized to bypass conventional security perimeters.

Indirect Prompt Injection: The RAG Security Crisis

Retrieval-Augmented Generation (RAG) has introduced a significant security crisis for enterprise AI. Malicious data injected into a vector database can hijack an agentic workflow, causing the model to exfiltrate sensitive information or execute unauthorized actions via third-party plugins. Consider an email summary tool: a single incoming email with hidden instructions could command the AI to forward all future correspondence to an external server. Organizations must prioritize OWASP Top 10 for LLMs compliance to address these non-obvious entry points. To build a more resilient governance framework against these threats, leaders should consider an Executive AI Strategy Workshop.

Cognitive Hacking and Social Engineering

Techniques like Typoglycemia and base64 encoding obfuscation allow attackers to slip malicious intent past standard keyword filters. Beyond technical tricks, models are vulnerable to emotional manipulation. Attackers use high-pressure narratives or "persona adoption" to override safety guardrails. Jailbreak communities remain persistent, sharing sophisticated payloads that evolve faster than static defenses can adapt. This cognitive hacking targets the model's reasoning logic rather than its code, making it a uniquely difficult vector to secure without a layered, strategic approach.

Evaluating Mitigation Strategies: A Strategic Comparison

Effectively mitigating prompt injection vulnerabilities requires a transition from reactive patching to a structured, multi-layered evaluation of risk. While IBM explains prompt injection attacks as a core business threat, the solution isn't found in a single tool but in a sophisticated blend of model-level and system-level controls. Input-side filtering has evolved from simple keyword blocking to deep semantic intent analysis. Instead of merely scanning for phrases like "ignore instructions," these systems use a secondary model to evaluate the underlying intent of a query before it reaches the primary LLM. This dual-model architecture ensures that adversarial prompts are neutralized at the gateway, preserving the integrity of the downstream processing environment.

Output-side filtering provides the final line of defense against exfiltration. By monitoring responses for personally identifiable information or proprietary system instructions, you can intercept malicious payloads that slipped through initial gates. Beyond filtering, architectural isolation is the most robust method for mitigating prompt injection vulnerabilities in enterprise AI. This approach sandboxes the LLM, ensuring it can't access critical system APIs without a middle-tier validation layer. This separation of concerns creates a defensible posture that treats the model as an untrusted execution environment, significantly reducing the blast radius of a successful injection. Model-level hardening through fine-tuning on adversarial datasets further reinforces this boundary, making the model itself more resistant to common manipulation techniques.

Technical vs. Procedural Controls

Deciding between a "Semantic Firewall" and human-in-the-loop review is a strategic choice between scale and absolute certainty. Automated technical controls provide the low latency required for real-time applications but may occasionally block legitimate user requests. High-latency procedural controls are reserved for high-stakes environments where the cost of a single breach outweighs the need for speed. Mastery comes from finding the middle ground of usable strategy, where security enhances rather than hinders model utility.

The Role of LLM Guardrails

Tools like NeMo Guardrails or Llama Guard are essential for defining the operational boundaries of enterprise AI. These frameworks allow you to implement "canonical flows" that force the model to adhere to specific logic paths. Avoid the trap of "Self-Correction" as a primary security measure; if an attacker can subvert the model's logic, they can easily subvert its internal audit. For deeper context, consult our strategic framework for AI cybersecurity to see how these tactics integrate into a comprehensive 2026 defense plan.

Implementing a Layered Defense-in-Depth Framework

Building a resilient AI infrastructure requires moving beyond the "cat-and-mouse" game of prompt engineering toward a structured, multi-layered defense. Because Large Language Models cannot fundamentally distinguish between data and instructions, security must be enforced through the architecture surrounding the model. A robust framework for mitigating prompt injection vulnerabilities begins with a Zero Trust posture for AI agents. This means treating every model output as an untrusted command, especially in agentic systems where the AI has the authority to execute real-world actions. By 2026 standards, this involves adhering to the OWASP Top 10 for Agentic Applications (ASI), ensuring that no agent possesses broader system permissions than absolutely necessary for its specific task.

The second layer of this framework involves deploying specialized "judge" models to audit both input and output streams. These secondary, high-integrity LLMs act as security gateways, scanning for adversarial intent before the primary model processes the request. When combined with continuous monitoring for anomalous semantic patterns, organizations can detect exploits that bypass traditional signature-based filters. This proactive stance is the only way to maintain architectural integrity as attack vectors shift toward multimodal and indirect injections.

Instruction-Data Separation

Isolating user input from system logic is the most effective technical control for mitigating prompt injection vulnerabilities. Using structured formats like ChatML or specific XML tags allows the model to categorize tokens into distinct roles, reducing the likelihood of a "jailbreak" command being interpreted as a system-level instruction. The "Dual LLM" pattern takes this further by using a smaller, faster model to sanitize and rephrase user input into a safe format for the primary model. Rigorous and unambiguous delimitation of instruction channels from data channels serves as the essential foundation of prompt security.

Continuous Red-Teaming

Static security audits are insufficient for the dynamic nature of AI threats. Enterprises must move toward continuous adversarial red-teaming, utilizing AI-driven simulations to probe for weaknesses in context window management and RAG retrieval paths. Building a comprehensive library of "known bad" prompts allows for automated regression testing, ensuring that updates to the underlying model don't introduce new vulnerabilities. For organizations lacking internal specialized talent, hiring an AI cybersecurity consultant is a critical step in establishing these advanced testing protocols. To ensure your leadership team is prepared for these shifts, consider scheduling a Board-Level Cybersecurity Briefing to align your technical defenses with corporate risk appetite.

Strategic Governance: Moving from Technical Fixes to Board-Level Risk

Mitigating prompt injection vulnerabilities is no longer a localized IT concern but a fundamental requirement for a defensible corporate governance framework. As of 2026, regulatory mandates like the Colorado AI Act (SB 205) and the Texas Responsible AI Governance Act (TRAIGA) require documented risk mitigation for high-impact systems. Failing to secure these linguistic interfaces exposes the enterprise to severe financial penalties and legal liability. Leaders must translate the technical nuances of "jailbreaking" into the language of business risk, focusing on the potential for data exfiltration and the unauthorized execution of business processes. With 68 percent of organizations already experiencing AI-related data leaks, the stakes for organizational survival are absolute.

The role of the vCISO is essential in establishing robust AI acceptable use policies that go beyond simple "do's and don'ts." These policies must define the semantic boundaries of model interaction and establish clear incident response protocols specifically for prompt injection events. Unlike traditional cyber incidents, an injection attack requires semantic forensics to understand how a model's logic was subverted. An effective response plan ensures that once an anomalous pattern is detected, the affected agentic workflows are immediately quarantined while the underlying "judge" models are recalibrated. This transition from reactive patching to strategic oversight creates the mastery required for long-term AI resilience.

Reporting AI Risk to the Board

Board members require high-level, data-driven insights rather than deep technical jargon. Key metrics should focus on bypass rates during red-teaming, false positive rates in semantic firewalls, and the mean time to remediation for identified vulnerabilities. It's vital to communicate the "residual risk" of LLM non-determinism, ensuring stakeholders understand that security is a continuous process of management rather than a one-time fix. Structuring a vCISO advisory engagement around AI safety provides the board with a reliable source of truth in an era of rapid technological shifts.

The Future of AI Security Leadership

The modern CISO must evolve into an AI Security Officer who prioritizes enabling innovation through secure architecture. Instead of blocking AI tools, leadership focuses on building the "Semantic Firewalls" and Zero Trust environments discussed in earlier sections. This shift ensures that the organization remains competitive while mitigating prompt injection vulnerabilities at scale. Executive AI Strategy Workshops help leadership teams navigate this transition, providing the foresight needed to manage the current era's high stakes while keeping a firm grip on foundational security principles. This disciplined, data-driven approach moves the organization from a state of vulnerability to one of strategic readiness.

Architecting Resilience in the Era of Linguistic Compute

The transition from simple chatbots to autonomous agentic systems has fundamentally altered the enterprise risk landscape. You've seen that mitigating prompt injection vulnerabilities isn't a matter of finding a single technical patch; it's a commitment to a layered, defense-in-depth architecture that treats every model input as a potential instruction. By implementing Zero Trust principles and instruction-data separation, organizations move beyond a reactive posture to one of strategic readiness. Success in 2026 requires shifting these conversations from technical silos to the boardroom, ensuring AI security is integrated into the core of corporate governance.

Navigating this complex intersection of technical risk and business value requires seasoned leadership. With over 30 years of security leadership and as the author of "Cybersecurity in the Age of Artificial Intelligence," Dr. Daniel Glauber provides the expert mentorship needed to bridge this gap. You can secure your AI strategy with Dr. Daniel Glauber’s vCISO Advisory to transform your organization's vulnerability into a defensible competitive advantage. The path from uncertainty to mastery is clear; it's time to build a foundation that endures.

Frequently Asked Questions

Is prompt injection the same as jailbreaking?

Jailbreaking is a specific subset of prompt injection that focuses on bypassing a model's safety filters to generate prohibited content. While jailbreaking usually involves direct user interaction, prompt injection is a broader category that includes indirect attacks through external data sources. The core issue remains a structural failure where the model cannot distinguish between a developer's system instructions and a malicious actor's input.

Can I solve prompt injection with better prompt engineering?

No, prompt engineering is a non-deterministic defense that attackers can eventually bypass through persistence and semantic variation. Relying on linguistic tweaks is a reactive strategy that lacks the architectural rigidity of a true security control. Successfully mitigating prompt injection vulnerabilities requires system-level isolation, delimited instruction channels, and the implementation of a Zero Trust framework for all model interactions.

What is the "Dual LLM" architecture for security?

The "Dual LLM" pattern utilizes a smaller, highly constrained "controller" model to sanitize and rephrase user input before it reaches the primary model. This secondary layer acts as a semantic firewall, stripping away adversarial framing or hidden malicious commands. By decoupling the initial input processing from the main reasoning task, you create a dedicated security boundary that protects the primary model's logic.

How does indirect prompt injection affect RAG systems?

Indirect injection weaponizes the external data retrieved by the system, such as a malicious website or a poisoned document, to override the model's original instructions. In Retrieval-Augmented Generation (RAG) workflows, this can lead to silent data exfiltration where the model is tricked into sending proprietary information to an external server. It turns the AI's ability to process external context into a high-impact vector for exploitation.

Are open-source models more vulnerable to prompt injection than closed models?

Vulnerability is determined by implementation architecture and training alignment rather than the licensing model itself. While closed models often feature extensive proprietary safety layers, open-source models allow for deeper security auditing and custom model-level hardening. Both categories remain susceptible to the fundamental structural flaw where instructions and data share the same processing channel, requiring a layered defense-in-depth approach regardless of the model source.

What are the legal implications of a prompt injection breach?

Organizations face significant liability under 2026 mandates like the Colorado AI Act and the Texas Responsible AI Governance Act for failing to secure high-impact systems. A successful breach can result in massive financial penalties, mandatory transparency reporting, and class-action lawsuits if sensitive consumer data is exfiltrated. Mitigating prompt injection vulnerabilities is now a baseline requirement for maintaining a defensible legal and regulatory posture in the modern enterprise.

How often should we perform AI red-teaming?

AI red-teaming must be a continuous, automated process rather than an annual event. Because model behavior can drift and new adversarial payloads are shared daily in specialized communities, static testing is insufficient for modern threats. Dynamic simulations should trigger whenever the model architecture is updated, new tools are integrated, or the agent gains access to broader data sources to ensure persistent resilience.

Does least privilege apply to AI agents?

Yes, the principle of least privilege is the cornerstone of securing autonomous and semi-autonomous AI agents. Agents should only possess the minimum API permissions and data access required to complete their specific tasks. This limits the potential blast radius of a successful injection, preventing a compromised model from pivoting into broader enterprise systems or exfiltrating sensitive databases through unauthorized tool execution.