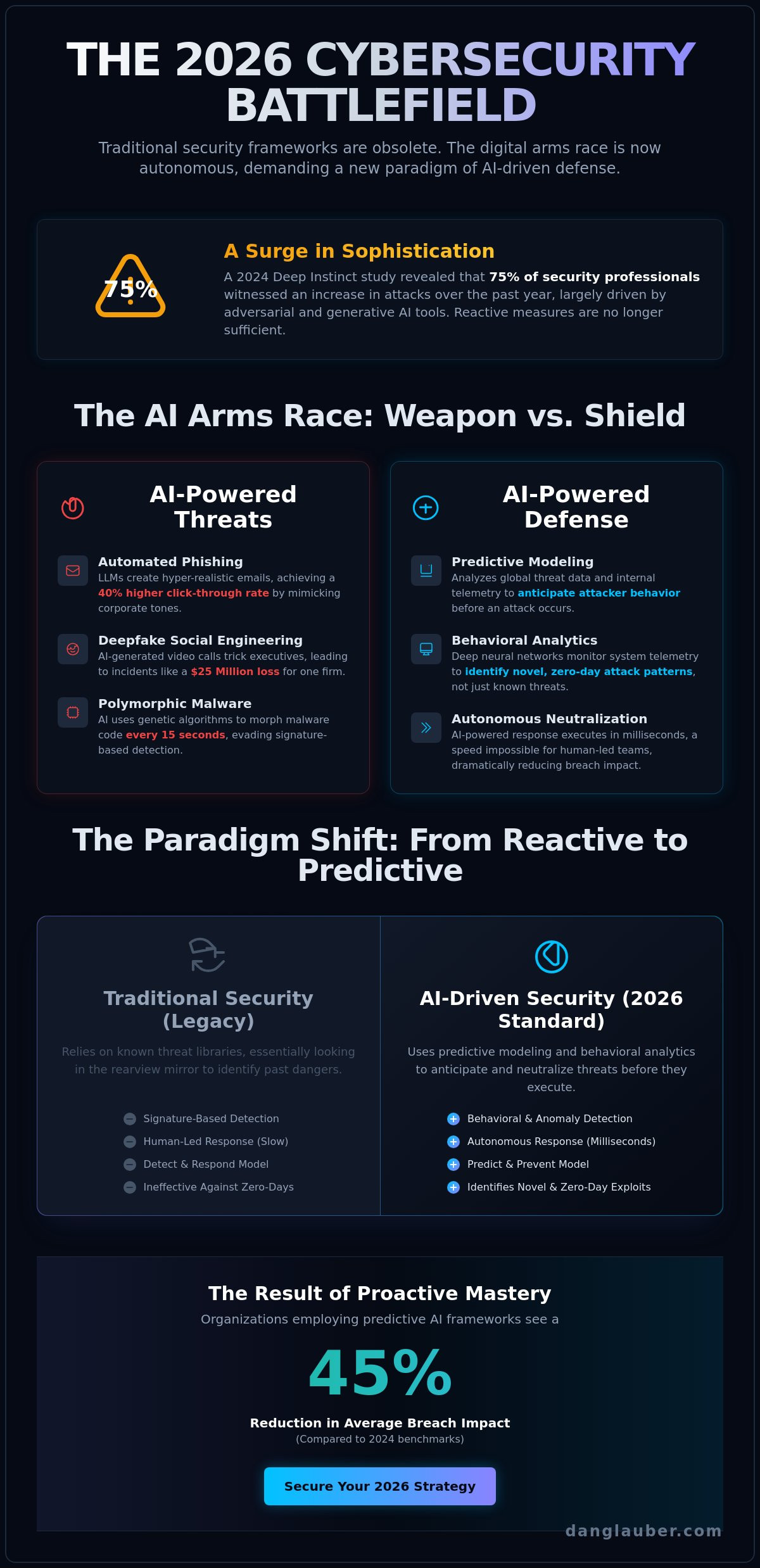

The security frameworks your organization relies on today will be obsolete by 2026 as adversarial machine learning transforms the digital battlefield into an automated arms race. You're likely exhausted by the relentless cycle of marketing hype, wondering if artificial intelligence in cybersecurity offers a genuine defense or merely adds another layer of expensive complexity. It's a valid concern; a 2024 study by Deep Instinct revealed that 75% of security professionals witnessed an increase in attacks over the past 12 months, largely driven by generative tools. You deserve a strategy that moves beyond reactive patching and into the realm of proactive mastery.

This guide empowers you to command the intersection of AI and cybersecurity with precision. We'll strip away the ambiguity to provide a definitive roadmap for navigating next-generation threats like deepfake injection and automated phishing. You'll gain access to actionable frameworks designed for real-world implementation and a clear understanding of the AI security domains that matter most. We'll explore how to secure your neural networks and optimize your ROI, ensuring your team is strategically prepared for the sophisticated challenges of 2026.

Key Takeaways

- Master the transition from traditional signature-based detection to advanced neural networks designed to predict and neutralize sophisticated 2026-era threats.

- Understand the dual-use nature of the digital battlefield to defend against the democratization of high-impact, low-cost AI tools utilized by modern adversaries.

- Apply strategic frameworks for artificial intelligence in cybersecurity that prioritize board-level oversight and organizational mastery of emerging attack vectors.

- Evaluate the critical risks of adversarial AI and structural model limitations to ensure your defensive posture remains resilient against autonomous exploitation.

- Position your organization for the 2026 executive outlook by bridging the gap between theoretical AI concepts and actionable, real-world security applications.

Defining the Intersection of AI and Cybersecurity in 2026

By 2026, the application of artificial intelligence in cybersecurity has transitioned from a competitive advantage to a fundamental organizational necessity. This intersection represents the strategic deployment of machine learning and deep neural networks to autonomously predict and neutralize adversarial threats. We're no longer defending static perimeters; we're operating on a digital battlefield where speed and scale are the primary variables determining survival. For a technical foundation on these deployments, the resource "Applications of AI in Cybersecurity" offers a comprehensive overview of how these technologies integrate into modern frameworks.

The distinction between legacy systems and 2026 standards is absolute. Traditional signature-based detection relies on a library of known threats, essentially looking in the rearview mirror to identify danger. In contrast, modern AI-driven behavioral analytics monitor system telemetry for anomalies that deviate from established norms. This allows security teams to identify novel attack patterns that haven't been documented in any database. 2026 marks the tipping point where automated security operations become the baseline. Human-led response times are simply insufficient against AI-powered exploits that execute in milliseconds.
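The contrast between the two approaches can be sketched in a few lines of Python. This is a minimal illustration, not a production detector: the signature set, the payload bytes, the traffic figures, and the z-score threshold of 3.0 are all assumptions chosen for the example.

```python
import hashlib
import statistics

# Hypothetical signature database: SHA-256 digests of already-known payloads.
KNOWN_BAD = {hashlib.sha256(b"known-malicious-payload").hexdigest()}

def signature_match(payload: bytes) -> bool:
    """Signature-based check: flags only payloads that are already catalogued."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD

def is_anomalous(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Behavioral check: flags values far from the learned baseline (z-score)."""
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        return observed != mean
    return abs(observed - mean) / stdev > threshold

# A payload that has never been catalogued slips past the signature lookup.
novel_payload = b"never-seen-before-payload"

# Assumed telemetry: outbound megabytes per hour for one host over a week.
baseline_mb_per_hour = [12.0, 14.5, 13.2, 11.8, 15.1, 12.9, 13.7]

print(signature_match(novel_payload))             # signature lookup result
print(is_anomalous(baseline_mb_per_hour, 250.0))  # behavioral check result
```

The signature lookup can only recognize what is already in its set, while the baseline check reacts to the unusual traffic the unknown payload produces. Real behavioral analytics use far richer features than a single z-score.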

From Machine Learning to Deep Neural Networks

The evolution of artificial intelligence in cybersecurity has moved rapidly from basic machine learning pattern recognition to the use of complex deep neural networks. While early ML models required structured data to identify simple anomalies, modern neural networks mimic human decision-making to identify zero-day vulnerabilities. These models process vast quantities of unstructured data to find correlations that escape human observation. The success of these systems relies on data quality; high-fidelity, diverse datasets are the fuel that allows neural networks to reach the precision required for autonomous defense. Without rigorous data curation, even the most advanced models will produce false positives that paralyze security operations.

The Shift from Reactive to Predictive Defense

The historical "detect and respond" model is obsolete in an era of automated warfare. Organizations have pivoted toward "predict and prevent" strategies that use predictive modeling to anticipate attacker behavior before the first packet is sent. By analyzing global threat intelligence and internal telemetry, these systems identify the precursors of an attack, such as reconnaissance activity or credential harvesting attempts. Data from early 2026 indicates that companies employing these predictive frameworks have reduced their average breach impact by 45 percent compared to 2024 benchmarks. AI-driven predictive defense is the cornerstone of 2026 security.

The Digital Battlefield: AI as Both Threat and Defense Strategy

We are witnessing a definitive shift in the digital battlefield. The dual-use nature of artificial intelligence in cybersecurity means the same neural networks optimizing enterprise defense are also empowering sophisticated threat actors. This is a technological arms race where the democratization of cybercrime has lowered the barrier to entry. High-impact tools that once required nation-state resources are now accessible for minimal costs on dark web forums. Organizations must reject the false dichotomy of innovation versus security; in this era, security is the primary engine of sustainable innovation. Success requires a strategic mastery of these tools to maintain a proactive posture against evolving attack vectors.

How AI Enables Next-Generation Cyber Attacks

Automated phishing campaigns now achieve 40% higher click-through rates by using large language models to mimic specific corporate tones with surgical precision. Deepfake social engineering has evolved beyond simple audio clips; a 2024 incident resulted in a $25 million loss for a multinational firm after a video-call scam featured AI-generated representations of company executives. Adversarial AI can now analyze Multi-Factor Authentication (MFA) timing patterns to execute bypass maneuvers that trick traditional verification systems. Additionally, AI-generated malware uses genetic algorithms to morph its code structure every 15 seconds, allowing it to evade detection by signature-based security software that relies on static databases.

AI-Driven Defensive Countermeasures

Mastery of the digital environment requires integrating AI into Security Information and Event Management (SIEM) platforms to reduce false positives by 75% through continuous behavioral baselining. Security teams use Security Orchestration, Automation, and Response (SOAR) to automate incident response, which has been shown to cut mean time to remediation (MTTR) from hours to seconds. CISA's AI cybersecurity guidance provides the essential groundwork for building resilient infrastructures. A critical component of this defense involves enhancing Zero-Trust Architecture, where AI verifies every interaction based on 200+ risk signals in real time. This granular level of verification ensures that identity becomes the new perimeter.
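To make the idea of per-interaction risk scoring concrete, here is a minimal sketch of how a handful of risk signals might be combined into an access decision. The signal names, weights, and thresholds are hypothetical; a real zero-trust engine would draw on many more signals and a tuned model rather than a fixed weighted average.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Signal:
    name: str
    weight: float  # relative importance (assumed for illustration)
    value: float   # 0.0 = benign, 1.0 = highly suspicious

def risk_score(signals: list[Signal]) -> float:
    """Weighted average of signal values, in the range 0.0 to 1.0."""
    total_weight = sum(s.weight for s in signals)
    if total_weight == 0:
        return 0.0
    return sum(s.weight * s.value for s in signals) / total_weight

def decide(score: float, step_up_at: float = 0.3, deny_at: float = 0.7) -> str:
    """Map a risk score to an access decision."""
    if score >= deny_at:
        return "deny"
    if score >= step_up_at:
        return "step_up_auth"
    return "allow"

request = [
    Signal("new_device", weight=2.0, value=1.0),
    Signal("impossible_travel", weight=3.0, value=1.0),
    Signal("off_hours_login", weight=1.0, value=0.0),
]
print(decide(risk_score(request)))  # score is 5/6, above the deny threshold
```

The middle tier (`step_up_auth`) reflects a common design choice: a borderline score triggers additional verification instead of blocking the user outright.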

To build a more resilient organizational posture, you might consider exploring Dr. Glauber's latest actionable frameworks designed for executive leadership. Moving from a state of vulnerability to one of strategic readiness is the only way to navigate the intersection of AI and security effectively.

Strategic Frameworks for Implementing AI-Driven Security

Success on the digital battlefield requires more than just advanced software; it demands a structured methodology for organizational readiness. Dr. Glauber's frameworks provide a definitive roadmap for leaders to transition from reactive defense to proactive mastery. Security is no longer a localized IT concern. It requires board-level oversight to ensure that artificial intelligence in cybersecurity aligns with core business objectives and risk tolerances. A vCISO often serves as the critical bridge in this transformation, translating technical neural network capabilities into strategic executive decisions that protect the bottom line.

Step 1: Assessing the AI Risk Landscape

Organizations must begin with a rigorous audit of existing vulnerabilities. This process identifies high-value assets, such as proprietary data sets and customer PII, that require immediate AI-driven protection. Establishing a baseline for current detection and response times is vital for measuring future ROI. In October 2024, reports from the DHS on Leveraging AI for Cybersecurity emphasized that strategic exploration of AI is essential for enhancing national resilience and supply chain oversight. Leaders should evaluate how adversarial AI might exploit current gaps before deploying defensive countermeasures. This audit acts as the foundation for a resilient security posture heading into 2026.
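A baseline for detection and response times can be computed from incident records the organization already keeps. The sketch below assumes each incident has three timestamps (occurred, detected, remediated) and measures remediation time from the moment of detection. Both the record layout and that definition are assumptions, and the sample data is invented for illustration.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records: (occurred, detected, remediated).
INCIDENTS = [
    (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 11, 0), datetime(2025, 1, 6, 17, 0)),
    (datetime(2025, 2, 3, 14, 0), datetime(2025, 2, 3, 15, 0), datetime(2025, 2, 3, 19, 0)),
    (datetime(2025, 3, 9, 22, 0), datetime(2025, 3, 10, 1, 0), datetime(2025, 3, 10, 6, 0)),
]

def hours(delta):
    """Convert a timedelta to fractional hours."""
    return delta.total_seconds() / 3600

def baseline(incidents):
    """Mean time to detect and mean time to remediate, in hours."""
    mttd = mean(hours(detected - occurred) for occurred, detected, _ in incidents)
    mttr = mean(hours(remediated - detected) for _, detected, remediated in incidents)
    return {"mttd_hours": mttd, "mttr_hours": mttr}

print(baseline(INCIDENTS))  # {'mttd_hours': 2.0, 'mttr_hours': 5.0}
```

Recomputing the same two numbers after an AI-driven tool is deployed gives a like-for-like comparison for ROI discussions.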

Step 2: Selecting the Right AI Security Partners

Vendor selection is a high-stakes decision that dictates long-term technical maturity. When choosing the right AI security company, transparency is the primary metric. You need to know how their models are trained and whether they utilize secure, non-biased data sets. Scalability is equally critical for mid-to-large organizations. A solution that functions in a sandbox but fails under the weight of 50,000 daily alerts isn't a viable defense strategy. Demand proof of performance in real-world environments and ensure the partner provides a clear roadmap for model updates as attack vectors evolve.

Step 3: Human-in-the-Loop Governance

AI shouldn't replace your security professionals; it should augment their capabilities. The Human-in-the-Loop (HITL) model ensures that people remain the final authority for high-stakes security decisions. This governance structure prevents automated errors from cascading into systemic failures. Organizations must develop a training roadmap to upskill their existing team. Mastery of artificial intelligence in cybersecurity isn't about learning to code, but learning to orchestrate automated systems. This approach transforms analysts into strategic hunters who use AI to amplify their expertise. By 2026, the most secure organizations will be those that strike a perfect balance between machine speed and human intuition.
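One way to express this governance rule in an automated pipeline is a routing function that decides whether a proposed response may run unattended. The sketch below is illustrative only: the `ProposedAction` fields and the 0.95 confidence threshold are assumptions, and each organization would define "high impact" according to its own risk tolerance.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ProposedAction:
    description: str
    model_confidence: float  # 0.0 to 1.0, as reported by the detection model
    high_impact: bool        # e.g. isolating a production server

def route(action: ProposedAction, auto_threshold: float = 0.95) -> str:
    """Return 'auto_execute' or 'human_review' for a proposed response."""
    # High-impact actions always go to a person, whatever the confidence.
    if action.high_impact:
        return "human_review"
    # Low-impact actions run unattended only when the model is very sure.
    if action.model_confidence >= auto_threshold:
        return "auto_execute"
    return "human_review"

print(route(ProposedAction("quarantine phishing email", 0.99, high_impact=False)))
print(route(ProposedAction("isolate payment server", 0.99, high_impact=True)))
```

Checking impact before confidence is deliberate: a confident model is still not permitted to take a high-stakes action without human sign-off.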

Navigating the Risks: Adversarial AI and Structural Limitations

The most frequent objection from C-suite executives is whether it's truly safe to let an algorithm run the defense of an entire enterprise. This skepticism is healthy. Entrusting autonomous systems with the "kill switch" for a corporate network introduces a new class of strategic vulnerability. AI models don't possess human intuition; they're mathematical constructs vulnerable to the same logic-based exploitation as any other software. Relying on artificial intelligence in cybersecurity as a silver bullet is a dangerous myth that ignores the necessity of human oversight. The idea that AI eliminates all risk is a fallacy that leads to organizational complacency.

Adversarial AI and Model Poisoning

Threat actors are already weaponizing the digital battlefield by targeting the AI models themselves. Through model poisoning, attackers inject malicious data into a training set to create specific "blind spots" in the system's logic. If an attacker can influence 3% of the training data, they can often bypass detection entirely. Evasion techniques are equally lethal; attackers subtly modify file signatures or network traffic to fool neural networks into misclassifying a threat as harmless. AI security is only as strong as its training integrity.
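One basic safeguard for training integrity is to fingerprint an approved dataset and verify that fingerprint before every retraining run. The following sketch uses SHA-256 from Python's standard library; the record format is hypothetical. Note the limitation: this only detects changes made after the dataset was approved and does nothing about poisoned records that were present at collection time.

```python
import hashlib

def fingerprint(records: list[bytes]) -> str:
    """Order-sensitive SHA-256 digest over a list of training records."""
    digest = hashlib.sha256()
    for record in records:
        # A length prefix keeps different record boundaries from colliding.
        digest.update(len(record).to_bytes(8, "big"))
        digest.update(record)
    return digest.hexdigest()

# Hypothetical labeled samples: "<feature summary>,<label>".
approved = [b"conn=443;bytes=1200,benign", b"conn=4444;bytes=90000,malicious"]
approved_digest = fingerprint(approved)

# An attacker flips one label to create a blind spot.
tampered = [b"conn=443;bytes=1200,benign", b"conn=4444;bytes=90000,benign"]

print(fingerprint(approved) == approved_digest)  # True: dataset unchanged
print(fingerprint(tampered) == approved_digest)  # False: tampering detected
```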

The Challenge of AI Hallucinations in Security

Hallucinations aren't just a quirk of generative text; they're a critical failure point for defensive models. When an AI becomes over-sensitive, it generates a cascade of false positives that can paralyze a Security Operations Center (SOC). A 2024 industry report found that 60% of security analysts struggle with alert fatigue, often caused by "hallucinated" threats that don't exist in reality. This operational friction creates a window of opportunity for real attackers to slip through the noise. Continuous model validation and rigorous monitoring are the only ways to ensure that artificial intelligence in cybersecurity remains an asset rather than a liability.
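The arithmetic behind alert fatigue is worth seeing once. Because genuine attacks are rare compared with benign events, even a small false-positive rate can bury the real alerts. The sketch below uses invented figures (100 attacks among one million events, a 99% detection rate, and a 1% false-positive rate) purely to illustrate the effect.

```python
def alert_breakdown(attacks: int, benign_events: int, tpr: float, fpr: float) -> dict:
    """Expected alert counts and precision for a detector.

    tpr: share of real attacks that raise an alert (true-positive rate)
    fpr: share of benign events that raise an alert (false-positive rate)
    """
    true_alerts = attacks * tpr
    false_alerts = benign_events * fpr
    total = true_alerts + false_alerts
    precision = true_alerts / total if total else 0.0
    return {
        "true_alerts": true_alerts,
        "false_alerts": false_alerts,
        "precision": precision,
    }

# Assumed workload: 100 attacks hidden among 999,900 benign events.
result = alert_breakdown(attacks=100, benign_events=999_900, tpr=0.99, fpr=0.01)
print(result)
```

With these assumed inputs, about 99 real alerts compete with roughly 9,999 false ones, so fewer than 1 in 100 alerts an analyst opens corresponds to an actual attack. This is why continuous validation of the false-positive rate matters as much as raw detection accuracy.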

Ethical implications also loom large over automated decision-making. When an AI blocks a critical business process during a suspected breach, the resulting downtime can cost an organization thousands of dollars per minute. Accountability can't be outsourced to a machine. Strategic mastery requires a framework where AI provides the intelligence, but human experts retain the authority to execute the final countermeasures. This balance ensures that the speed of AI is tempered by the judgment of experienced practitioners.

Mastery and Readiness: The 2026 Executive Outlook

The digital battlefield of 2026 demands a departure from reactive defense. The intersection of AI and cybersecurity is no longer a peripheral concern; it's the core operational reality for every global enterprise. Industry data suggests that by Q3 2026, 82% of sophisticated attacks will utilize adversarial machine learning to bypass traditional perimeter defenses. Leaders who remain stuck in technical anxiety risk obsolescence. Success requires a transition to strategic mastery, where AI isn't just a tool but a foundational element of the security architecture. Organizations must move beyond tentative pilot programs. Achieving full-scale AI integration is the only way to match the speed and scale of modern attack vectors.

Dr. Daniel Glauber’s groundbreaking work, "Cybersecurity in the Age of Artificial Intelligence," serves as the definitive guide for this transition. Through 18 comprehensive chapters and 50+ real-world case studies, the book provides the actionable frameworks necessary to build resilient systems. It bridges the gap between theoretical neural networks and practical, high-stakes defense strategies. By anchoring your strategy in these proven principles, you transform artificial intelligence in cybersecurity from a source of uncertainty into a pillar of organizational strength.

Future-Proofing Your Security Posture

The latter half of 2026 will see the rise of autonomous defensive agents capable of sub-millisecond response times. To stay ahead, organizations must cultivate a resilient culture that views technological shifts as opportunities rather than threats. This cultural evolution is sustained through regular executive workshops. These sessions ensure that the C-suite maintains clarity on the AI threat curve, transforming artificial intelligence in cybersecurity from a technical hurdle into a competitive advantage. Prioritizing decentralized identity management and self-healing networks will be critical benchmarks for success in 2027.

Taking the Next Step with Dr. Daniel Glauber

Navigating this complex landscape requires personalized strategic leadership. Dr. Glauber offers vCISO advisory services to help organizations implement bespoke strategic frameworks that align with specific risk profiles. For boards and executive teams requiring high-level clarity, his keynote speaking engagements provide a visionary roadmap through the digital battlefield. To begin your journey toward mastery, secure your copy of the foundational book through the official portal today. This is the essential first step for any leader committed to securing their organization’s future in the age of AI.

Mastering the 2026 Digital Battlefield

The landscape of 2026 demands a shift from reactive defense to proactive strategic mastery. This evolution proves that the intersection of artificial intelligence and cybersecurity isn't just a technical upgrade; it's a fundamental restructuring of how we protect critical assets. Organizations must implement actionable frameworks that address both the defensive potential of neural networks and the rising threat of adversarial AI. Success requires a dual-perspective approach that balances zero-trust architecture with the speed of automated response systems to neutralize sophisticated attack vectors.

Realizing this level of strategic readiness requires more than just foresight. It demands the depth of knowledge found in 50+ real-world case studies and frameworks currently utilized by mid-to-large organizations across the globe. You can bridge the gap between abstract theory and practical application by leveraging the insights of Dr. Daniel Glauber, a 30-year technology veteran. It's time to transform your security posture into a resilient, AI-driven advantage that commands respect in an evolving era.

Master the digital battlefield: order "Cybersecurity in the Age of Artificial Intelligence" today.

Frequently Asked Questions

Is artificial intelligence replacing cybersecurity professionals in 2026?

No, AI isn't replacing human professionals; it's serving as a force multiplier on the digital battlefield. By 2026, the global cybersecurity workforce gap is projected to reach 4 million unfilled positions according to ISC2. AI fills this void by automating 70% of Tier 1 SOC alerts. This shift allows human analysts to focus on high-level strategic reasoning and complex incident response that neural networks can't yet replicate.

What is the biggest risk of using AI in cybersecurity today?

The most critical risk is data poisoning, where attackers inject malicious data into training sets to manipulate model behavior. In 2025, security researchers identified that even a 3% corruption of training data can degrade a model's detection accuracy by nearly 50%. This creates blind spots in your defense perimeter. Organizations must implement rigorous data hygiene and validation protocols to prevent these subtle, long-term compromises of their primary defense logic.

How does generative AI impact social engineering and phishing attacks?

Since late 2022, generative AI has driven a 1,265% increase in phishing volume and made campaigns far more sophisticated. It enables attackers to launch hyper-personalized campaigns in 15 different languages simultaneously without grammatical errors. These AI-generated messages bypass traditional email filters that look for linguistic anomalies. To counter this, firms are adopting Zero-Trust Architecture to verify every identity, regardless of how convincing the AI-generated message appears.

Can small and mid-sized businesses afford AI-driven security solutions?

Yes, SMBs can access these tools through the Managed Security Service Provider (MSSP) model, which reduces initial capital expenditure by 40%. The 2024 IBM Cost of a Data Breach Report shows that organizations using AI and automation saved an average of $2.22 million compared to those that didn't. For a mid-sized firm, the return on investment comes from preventing a single $150,000 ransomware event through proactive, AI-driven threat hunting.

What is adversarial machine learning and why should CISOs care?

Adversarial machine learning involves tactics designed to trick or subvert AI models, such as evasion attacks that hide malware from scanners. CISOs must prioritize this because the MITRE ATLAS framework now lists over 30 specific techniques used to exploit machine learning vulnerabilities. If your AI-driven security tooling isn't hardened against these tactics, your automated defenses become a liability. It's a fundamental shift in the attack surface that requires specialized countermeasures.

How can boards of directors better oversee AI security risks?

Boards should demand quantitative risk assessments that align with the SEC’s 2023 cybersecurity disclosure requirements. They need to move beyond technical jargon and focus on AI Resiliency Scores and potential financial impact per breach event. By establishing an AI Ethics and Security Committee, the board ensures that 100% of AI deployments undergo a rigorous risk-benefit analysis. This oversight transforms security from a technical hurdle into a core pillar of corporate governance.
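A common starting point for the kind of quantitative assessment described above is annualized loss expectancy (ALE): the expected loss from a single event multiplied by how often that event is expected per year. The sketch below applies that standard formula to invented figures; the loss amount, event rates, and control cost are all assumptions for illustration, not benchmarks.

```python
def annualized_loss_expectancy(single_loss: float, annual_rate: float) -> float:
    """ALE = single loss expectancy x annualized rate of occurrence."""
    return single_loss * annual_rate

def net_control_value(ale_before: float, ale_after: float, annual_cost: float) -> float:
    """Yearly risk reduction from a control, minus what the control costs."""
    return ale_before - ale_after - annual_cost

# Assumed scenario: a $150,000 event expected once every two years today,
# and once every four years after an AI-driven control costing $20,000/year.
before = annualized_loss_expectancy(150_000, 0.5)
after = annualized_loss_expectancy(150_000, 0.25)

print(before)                                     # 75000.0
print(after)                                      # 37500.0
print(net_control_value(before, after, 20_000))   # 17500.0
```

Framing each AI deployment this way gives a board a single comparable figure per control, although the inputs themselves remain estimates that should be revisited as incident data accumulates.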

What are the first steps to building an AI-ready security framework?

The first step is conducting a comprehensive inventory of all data assets and existing AI touchpoints within the organization. You should adopt the NIST AI Risk Management Framework 1.0 as your foundational blueprint. This involves setting clear Acceptable Use policies for generative tools and ensuring that your AI-driven security tooling is integrated with your existing Zero-Trust protocols. Establishing this baseline allows for the scalable deployment of more advanced neural networks later.

Will AI eventually take over all aspects of cyber defense?

No, AI won't take over every aspect because it lacks the creative intuition required for novel threat scenarios. While AI will manage 90% of routine monitoring and patch management by 2026, humans remain the final decision-makers for high-stakes strategic responses. The future of the digital battlefield relies on a Human-in-the-Loop model. This synergy ensures that we leverage machine speed for data processing while maintaining human accountability for ethical and complex tactical choices.