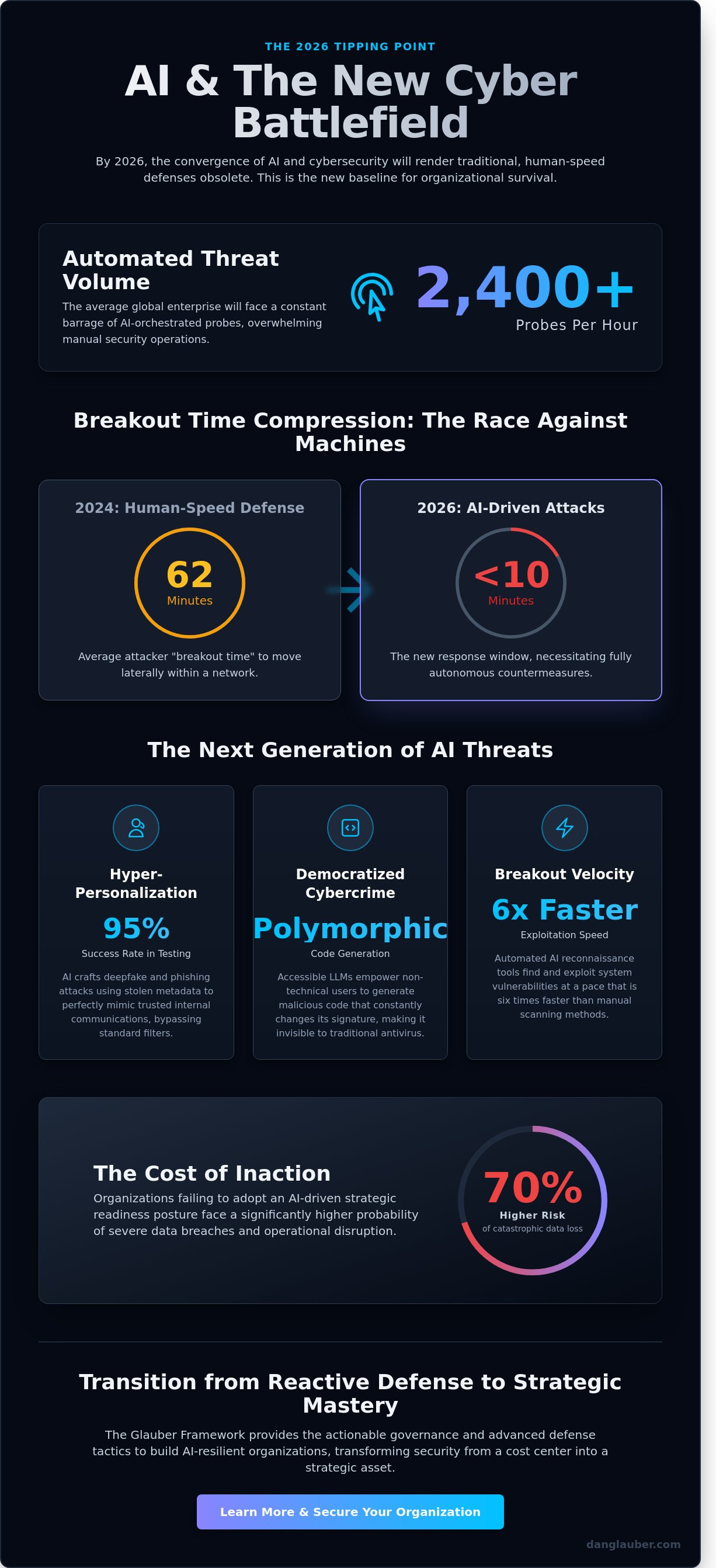

By 2026, the average global enterprise will face over 2,400 automated, AI-orchestrated probes every hour, rendering traditional human-speed perimeters completely obsolete. This shift in the digital battlefield means your mastery of AI and cybersecurity is no longer optional; it's the baseline for organizational survival. You’re likely feeling the mounting pressure as polymorphic threats outpace your existing security stack. Communicating these technical risks to a board that expects 100% uptime is a challenge that keeps even the most seasoned CISOs awake at night. For those seeking to manage the mental toll of such high-pressure roles, you can discover Siegel Psychology Services for evidence-based support.

You don't have to manage this transition without a map. This article provides a definitive strategic framework to help you secure your organization against the next generation of threats. We’ll move beyond the hype to establish deep organizational resilience through actionable governance and advanced defense tactics. We will examine the mechanics of adversarial AI, outline a 2026-ready defense architecture, and provide the leadership language necessary to align technical defense with executive-level risk management. By applying these core knowledge areas, you'll transform your security posture from a reactive cost center into a proactive strategic asset.

Key Takeaways

- Understand why 2026 represents the critical threshold where AI-automated cybercrime outpaces traditional defenses, demanding a fundamental shift in your digital strategy.

- Gain a visionary edge by decoding the mechanics of adversarial machine learning and the dual-use nature of AI in the modern digital battlefield.

- Discover why conventional firewalls fail against polymorphic threats and how to master the intersection of AI and cybersecurity to maintain organizational resilience.

- Learn to implement the actionable Glauber Framework to identify hidden "Shadow AI" vulnerabilities and transition from reactive defense to strategic mastery.

- Explore the essential role of the vCISO in bridging the gap between technical AI risks and long-term business value through structured resilience management.

The Intersection of AI and Cybersecurity: A New Digital Battlefield

The year 2026 represents a definitive tipping point where AI and cybersecurity move from experimental phases into a state of total convergence. This isn't a gradual evolution; it's a transformative force that reshapes the digital battlefield. Dr. Daniel Glauber identifies this period as the moment when AI-automated cybercrime reaches a level of sophistication that renders legacy systems obsolete. Organizations must transition from reactive patching to a state of proactive, strategic readiness. Glauber’s dual-perspective approach frames AI as both the primary threat vector and the most critical defense mechanism available to modern enterprises.

Mastery in this new era requires a departure from traditional IT mindsets. The speed of change demands a sophisticated blend of academic authority and professional urgency. We're no longer just protecting servers; we're defending the integrity of autonomous systems that think, learn, and adapt. This section explores how the bridge between theory and practice is being built through actionable frameworks designed for the expert practitioner.

What is AI in Cybersecurity?

Modern defense relies on neural networks to identify behavioral anomalies at a scale human analysts can't match. While traditional automation follows rigid "if-then" scripts, intelligent adaptive security learns from every interaction to predict future attack vectors. This shift, the core theme of 'Cybersecurity in the Age of Artificial Intelligence', makes adaptive defense the mandatory baseline for any firm managing sensitive data. By integrating AI safety protocols into defensive frameworks, leaders can ensure their neural networks remain resilient against adversarial manipulation. It's the difference between a static lock and a living, breathing guardian that recognizes a thief's gait before they reach the door.
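To make the contrast between rigid rules and a learned baseline concrete, here is a minimal Python sketch. It is a simplified stand-in rather than a neural network: it learns "normal" as a rolling mean and standard deviation and flags large z-score deviations. The names `static_rule` and `AdaptiveBaseline`, the 24-observation window, the warm-up of 5 observations, and the threshold of 3 are all hypothetical choices for illustration.

```python
from collections import deque
from statistics import mean, pstdev


def static_rule(logins_per_hour: int, limit: int = 100) -> bool:
    """Fixed "if-then" logic: alert only when a hard-coded limit is exceeded."""
    return logins_per_hour > limit


class AdaptiveBaseline:
    """Learns what "normal" looks like for one entity and flags large deviations.

    A rolling z-score is used here as a simple statistical stand-in for the
    neural-network models described in the text.
    """

    def __init__(self, window: int = 24, z_threshold: float = 3.0, warmup: int = 5):
        self.history = deque(maxlen=window)  # most recent observations only
        self.z_threshold = z_threshold
        self.warmup = warmup  # observations needed before scoring starts

    def observe(self, value: float) -> bool:
        """Return True if `value` deviates sharply from the learned baseline."""
        anomalous = False
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = pstdev(self.history) or 1.0  # avoid division by zero on flat data
            anomalous = abs(value - mu) / sigma > self.z_threshold
        # Simplification: every value, anomalous or not, updates the baseline.
        self.history.append(value)
        return anomalous


if __name__ == "__main__":
    detector = AdaptiveBaseline()
    for logins in [4, 5, 6, 5, 4, 6, 5]:  # a service account's normal hourly logins
        detector.observe(logins)
    print(static_rule(60), detector.observe(60))  # False True
```

A jump from about 5 to 60 logins per hour slips under the fixed limit of 100 but is dozens of standard deviations above this account's own history, which is the kind of context-dependent signal a static rule cannot express.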

The 2026 Threat Landscape

The democratization of generative AI has lowered the barrier to entry, allowing low-skill actors to launch high-impact campaigns. We're seeing the rise of deepfake-as-a-service and hyper-personalized phishing emails that bypass traditional filters with a 95% success rate in controlled testing environments. The speed of these attacks is unprecedented. In 2024, the average "breakout time" for an attacker to move laterally within a network was roughly 62 minutes. By 2026, AI-driven breaches are expected to reduce this window to under 10 minutes. This compression of time necessitates autonomous response systems that can execute countermeasures in milliseconds, long before a human operator can even open a ticket.

- Hyper-personalization: AI crafts social engineering attacks using stolen metadata to mimic internal corporate voices perfectly.

- Democratized Crime: Accessible LLMs allow non-technical users to generate polymorphic code that changes its signature to avoid detection.

- Breakout Velocity: Automated reconnaissance tools find and exploit vulnerabilities 6 times faster than manual scanning methods.

Dr. Glauber’s research into 50+ real-world case studies confirms that organizations failing to adopt AI-driven strategic readiness face a 70% higher risk of catastrophic data loss. The goal isn't just to survive the shift but to master the intersection of these technologies. This requires a disciplined, data-driven approach that values actionable insights over industry hype.

Adversarial AI vs. Defensive AI: The Arms Race

The digital battlefield isn't a static environment. It's a high-velocity competition. AI technology represents a dual-use instrument, serving as both a shield for the protector and a sword for the infiltrator. This duality creates a persistent cycle where defensive innovations are met with immediate offensive adaptations. Understanding AI and cybersecurity requires recognizing that the same neural networks used to detect malware can be repurposed to generate it. We're no longer fighting human hackers; we're fighting algorithms that learn from our every move.

Adversarial AI is the intentional manipulation of ML models to compromise security through malicious inputs or data corruption.

This manipulation typically occurs through three specific vectors. Data poisoning involves injecting "poisoned" information into training sets to create hidden backdoors. Evasion tactics use subtle "noise" to trick a model into misclassifying a threat as safe during real-time operations. Finally, model extraction focuses on stealing the underlying logic of a security tool to map out its blind spots. These mechanics allow attackers to turn a system’s intelligence against itself.
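Of the three vectors, evasion is the easiest to show in a few lines. The sketch below assumes a toy linear detector whose weights, bias, and feature values are invented for illustration, and applies a fast-gradient-sign-style perturbation: because the gradient of a linear score with respect to its input is simply the weight vector, nudging each feature a small, bounded step against the sign of its weight lowers the "malicious" score as quickly as possible. Real detectors are non-linear, so treat this only as a conceptual model of the attack.

```python
def score(weights: list[float], bias: float, features: list[float]) -> float:
    """Linear detector score: positive means 'malicious'."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def classify(weights: list[float], bias: float, features: list[float]) -> str:
    return "malicious" if score(weights, bias, features) > 0 else "benign"


def sign(v: float) -> int:
    """Return 1, -1, or 0 depending on the sign of v."""
    return (v > 0) - (v < 0)


def evade(weights: list[float], features: list[float], epsilon: float) -> list[float]:
    """Shift each feature by at most `epsilon` in the direction that lowers the score.

    For a linear model the input gradient is the weight vector itself, so this is
    the fast gradient sign method (FGSM) applied to minimise the malicious score.
    """
    return [x - epsilon * sign(w) for w, x in zip(weights, features)]


if __name__ == "__main__":
    w, b = [2.0, -1.0, 0.5], -1.0   # hypothetical trained detector
    sample = [1.0, 0.5, 0.4]        # hypothetical malicious sample
    print(classify(w, b, sample))                 # malicious (score 0.7)
    print(classify(w, b, evade(w, sample, 0.3)))  # benign (score about -0.35)
```

No individual feature moves by more than 0.3, yet the combined shift is enough to flip the verdict, which is why evasion is hard to spot by inspecting inputs one attribute at a time.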

How Adversaries Use AI

Modern attackers don't waste time on manual reconnaissance. They use AI to automate vulnerability discovery and exploit generation at a scale previously unimaginable. A 2023 study by independent security researchers found that AI-driven fuzzing can identify software flaws 20% faster than previous automated methods. Once a breach occurs, AI-enhanced scripts manage lateral movement within compromised networks. These scripts analyze traffic patterns to mimic legitimate administrative actions, making them nearly invisible to standard monitoring tools that look for "noisy" intrusions.

How Organizations Defend with AI

Defensive AI is the only viable countermeasure for modern threats. It enables predictive analytics that help pre-empt zero-day attacks. According to insights on AI and the Future of Cybersecurity, this technology is essential for managing the sheer volume of modern data. Self-healing network architectures can now isolate infected nodes in milliseconds, far faster than human reaction times allow. This rapid containment prevents a single infected laptop from becoming a company-wide ransomware event.
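At its simplest, the containment step reduces to a policy: when a node's risk score crosses a threshold, cut its connections before the infection spreads. The Python sketch below models the network as an adjacency map. The `Network` class, the `auto_contain` function, the node names, and the 0.9 threshold are hypothetical, and in practice the "isolate" action would be carried out by an EDR, NAC, or SDN control plane rather than a dictionary update.

```python
from dataclasses import dataclass, field


@dataclass
class Network:
    """Undirected adjacency map of which hosts can talk to which."""
    links: dict[str, set[str]]
    quarantined: set[str] = field(default_factory=set)

    def isolate(self, node: str) -> None:
        """Remove every link to and from `node` and record it as quarantined."""
        for peer in self.links.get(node, set()):
            self.links.setdefault(peer, set()).discard(node)
        self.links[node] = set()
        self.quarantined.add(node)

    def reachable(self, start: str) -> set[str]:
        """Hosts reachable from `start` (depth-first traversal)."""
        seen, frontier = {start}, [start]
        while frontier:
            current = frontier.pop()
            for peer in self.links.get(current, set()):
                if peer not in seen:
                    seen.add(peer)
                    frontier.append(peer)
        return seen - {start}


def auto_contain(net: Network, risk_scores: dict[str, float], threshold: float = 0.9) -> set[str]:
    """Isolate every node whose model-assigned risk score meets the threshold."""
    for node, risk in risk_scores.items():
        if risk >= threshold and node not in net.quarantined:
            net.isolate(node)
    return net.quarantined


if __name__ == "__main__":
    net = Network(links={
        "laptop-7": {"fileserver"},
        "fileserver": {"laptop-7", "hr-db"},
        "hr-db": {"fileserver"},
    })
    print(net.reachable("laptop-7"))  # fileserver and hr-db are reachable
    auto_contain(net, {"laptop-7": 0.97, "fileserver": 0.12})
    print(net.reachable("laptop-7"))  # set()
```

The detection model only has to emit a score; the containment policy itself stays deterministic and auditable, which makes it easier to explain to an incident review board.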

The shift from human-centric to AI-augmented Security Operations Centers (SOCs) is critical for survival. Traditional analysts suffer from debilitating alert fatigue, often facing over 1,000 alerts per day. AI systems filter this noise, reducing false positives by 75% and allowing professionals to focus on strategic threat hunting rather than mundane log review. Mastering the AI and cybersecurity landscape requires moving beyond manual processes. To build a resilient posture, leaders should implement actionable frameworks that integrate these automated defenses into their core strategy.
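The noise-reduction idea can be illustrated without any machine learning at all. The sketch below collapses duplicate alerts and ranks what remains by severity multiplied by asset criticality; in an AI-augmented SOC a trained model would typically supply the score instead. The alert fields (`rule`, `host`, `severity`), the criticality weights, and the cut-off of 5.0 are hypothetical.

```python
from collections import defaultdict


def triage(alerts: list[dict], asset_criticality: dict[str, float], min_score: float = 5.0) -> list[dict]:
    """Deduplicate alerts per (rule, host), score them, and drop low-risk noise."""
    grouped = defaultdict(list)
    for alert in alerts:
        grouped[(alert["rule"], alert["host"])].append(alert)

    queue = []
    for (rule, host), items in grouped.items():
        severity = max(a["severity"] for a in items)         # worst case in the group
        score = severity * asset_criticality.get(host, 1.0)  # unknown assets default to 1.0
        if score >= min_score:
            queue.append({"rule": rule, "host": host, "count": len(items), "score": score})

    # Highest-risk incidents first, so analysts start with what matters most.
    return sorted(queue, key=lambda item: item["score"], reverse=True)


if __name__ == "__main__":
    alerts = (
        [{"rule": "brute_force", "host": "dc-01", "severity": 3}] * 3
        + [{"rule": "port_scan", "host": "kiosk-2", "severity": 2}]
        + [{"rule": "c2_beacon", "host": "laptop-7", "severity": 8}]
    )
    criticality = {"dc-01": 3.0, "kiosk-2": 0.5}
    for item in triage(alerts, criticality):  # 5 raw alerts -> 2 prioritized items
        print(item)
```

Grouping before scoring is the design choice that matters most here: three identical brute-force alerts become one work item with a count, instead of three tickets competing for an analyst's attention.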

Why Traditional Security Fails in an AI-Driven World

Many IT leaders maintain a dangerous level of confidence in their existing security stacks. They argue that a robust firewall and a modern Endpoint Detection and Response (EDR) solution provide a sufficient perimeter. This perspective ignores the fundamental shift in the digital battlefield. Traditional tools rely on historical data to predict future threats, essentially looking in the rearview mirror to navigate a high-speed chase. At the intersection of AI and cybersecurity, this backward-looking approach is a strategic liability. AI-driven attacks operate at machine speed. Human analysts cannot process telemetry or authorize blocks fast enough to stop an automated exploit that executes in less than 200 milliseconds.

Static security policies are brittle. They work on a binary logic of "allow" or "deny" based on pre-defined rules. Adversarial AI exploits this rigidity by constantly probing for the one permutation your rules didn't anticipate. Transitioning to a state of mastery requires moving beyond these fixed defenses toward dynamic, adaptive frameworks that evolve as quickly as the threats they encounter.

The Obsolescence of Signature-Based Defense

Legacy systems work by recognizing signatures, or known patterns of malicious code. This method is ineffective against polymorphic AI malware. When malware uses generative techniques to rewrite its own code in real time, those signatures become useless: to a signature database, every iteration looks like a new, unknown file. A 2023 report by Deep Instinct revealed that 70% of malware now uses some form of evasion technique specifically designed to bypass traditional detection. Human-led threat hunting cannot scale to environments where millions of events occur every second. The 2023 MOVEit breach demonstrated how quickly legacy defenses can be overwhelmed when attackers exploit vulnerabilities at a scale that manual monitoring simply cannot track.

- AI malware mutates its identity to evade blacklists.

- Volume of data in modern enterprises exceeds human cognitive limits.

- Legacy systems lack the context to identify "low and slow" AI-driven exfiltration.
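A short Python sketch shows why exact signatures break down against mutating code. It assumes a blocklist of SHA-256 file hashes and a hypothetical set of behavioral indicators; the sample bytes, indicator names, and the two-indicator rule are invented for illustration. Changing a single byte produces an entirely different hash, while the behavior the sample exhibits is unchanged.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Signature database: hashes of previously seen malicious samples.
KNOWN_BAD_HASHES = {sha256_hex(b"sample-v1: encrypt_files(); beacon(c2)")}

# Hypothetical behavioral indicators observed at runtime (e.g. by an EDR sensor).
SUSPICIOUS_BEHAVIORS = {"mass_file_encryption", "c2_beacon", "shadow_copy_deletion"}


def signature_match(sample: bytes) -> bool:
    """Legacy check: flag only byte-for-byte matches with known malware."""
    return sha256_hex(sample) in KNOWN_BAD_HASHES


def behavior_match(observed: set[str], min_indicators: int = 2) -> bool:
    """Behavioral check: flag any sample exhibiting enough suspicious actions."""
    return len(observed & SUSPICIOUS_BEHAVIORS) >= min_indicators


if __name__ == "__main__":
    original = b"sample-v1: encrypt_files(); beacon(c2)"
    mutated = b"sample-v2: encrypt_files(); beacon(c2)"  # one byte changed
    behaviors = {"mass_file_encryption", "c2_beacon"}
    print(signature_match(original), signature_match(mutated))  # True False
    print(behavior_match(behaviors))                            # True
```

Real polymorphic malware changes far more than one byte, but the conclusion is the same: a hash identifies an artifact, not an intent, so detection has to key on what the code does.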

The Human-in-the-Loop Problem

The traditional security model creates a decision bottleneck. When an attack occurs, a human analyst must typically verify the alert before taking decisive action. This pause creates a window of opportunity for adversarial AI to complete its mission. To counter this, leaders must adopt a Strategic Advisory mindset, moving beyond manual oversight into the orchestration of autonomous systems. Implementing a Zero-Trust Architecture is no longer a luxury; it's a foundational requirement for the AI era. By aligning internal protocols with the NIST AI Risk Management Framework, organizations can transition from static policies to dynamic, risk-aware responses. This shift ensures that security scales with the speed of the threat. We must redefine the human role from a reactive gatekeeper to a strategic architect who masters the tools of AI and cybersecurity to maintain operational resilience.
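A dynamic, risk-aware response can be pictured as a per-request policy decision with tiered, automated outcomes, so that routine cases never wait in a human queue. The sketch below is a simplified illustration of Zero-Trust evaluation, not an implementation of the NIST AI Risk Management Framework; the `AccessRequest` fields, the weights, and the thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AccessRequest:
    user: str
    device_compliant: bool       # e.g. patched, disk encrypted, EDR running
    mfa_passed: bool
    impossible_travel: bool      # login location inconsistent with recent activity
    resource_sensitivity: int    # 1 = low, 2 = internal, 3 = crown-jewel data


def risk_score(req: AccessRequest) -> int:
    """Sum weighted risk signals; no request is trusted by default."""
    score = req.resource_sensitivity
    if not req.device_compliant:
        score += 3
    if not req.mfa_passed:
        score += 2
    if req.impossible_travel:
        score += 3
    return score


def decide(req: AccessRequest) -> str:
    """Map risk to an automated action; only the middle tier adds friction."""
    score = risk_score(req)
    if score >= 6:
        return "deny_and_isolate_session"
    if score >= 3:
        return "require_step_up_auth"
    return "allow"


if __name__ == "__main__":
    print(decide(AccessRequest("alice", True, True, False, 1)))  # allow
    print(decide(AccessRequest("eve", False, True, True, 3)))    # deny_and_isolate_session
```

Because the decision is recomputed on every request, a previously trusted session that starts showing risky signals is re-evaluated on its next access instead of keeping the access it was granted earlier.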

The Glauber Framework: Building AI-Resilient Organizations

Mastering the intersection of AI and cybersecurity requires more than deploying new software; it demands a structured, actionable framework that bridges the gap between theoretical risk and operational defense. Dr. Glauber’s methodology provides a definitive four-step blueprint designed for mid-to-large organizations facing the 2024 threat environment. This isn't a static document; it's a dynamic strategy for the digital battlefield. Organizations that fail to adopt a structured approach often find themselves reactive, struggling to keep pace with automated attack vectors.

The four steps are:

- Step 1, AI Risk Assessment: Security teams must identify "Shadow AI," where employees use unauthorized LLMs for internal tasks. Data from a 2023 industry survey indicates that 80% of workers use unsanctioned AI tools, creating invisible vulnerabilities. By working with specialized consultancies like Cloud2b, organizations can transition these users to secure, AI-enhanced productivity platforms that meet employee needs without compromising safety.

- Step 2, Architecture Review: Organizations must integrate AI-native tools into their existing security stack and ensure these systems communicate through a Zero-Trust Architecture. This prevents lateral movement if an AI agent is compromised.

- Step 3, Governance and Policy: This step sets the ethical guardrails for AI usage.

- Step 4, Continuous Monitoring: Persistent threat detection neutralizes adversarial AI in real time.

By 2025, analysts predict that 60% of enterprise security detections will be automated via AI-driven analytics to counter machine-speed threats.
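Shadow AI discovery usually starts with data the organization already collects, such as web-proxy or DNS logs. The sketch below assumes a simplified log format of `timestamp user domain`; the domain lists are placeholders under the reserved `.example` suffix, and a real deployment would maintain a curated list of AI service endpoints alongside its own inventory of sanctioned tools.

```python
from collections import Counter

# Placeholder lists; replace with a curated inventory of AI service endpoints.
AI_SERVICE_DOMAINS = {
    "chat.llm-one.example",
    "api.genai-two.example",
    "assistant.corp-approved.example",
}
SANCTIONED_AI_DOMAINS = {"assistant.corp-approved.example"}


def find_shadow_ai(log_lines: list[str]) -> Counter:
    """Count requests per (user, domain) to AI services that are not sanctioned."""
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:  # skip malformed lines rather than failing the scan
            continue
        _timestamp, user, domain = parts[:3]
        if domain in AI_SERVICE_DOMAINS and domain not in SANCTIONED_AI_DOMAINS:
            hits[(user, domain)] += 1
    return hits


if __name__ == "__main__":
    logs = [
        "2024-05-01T09:00 alice chat.llm-one.example",
        "2024-05-01T09:07 bob assistant.corp-approved.example",
        "2024-05-01T09:09 carol api.genai-two.example",
    ]
    for (user, domain), count in find_shadow_ai(logs).most_common():
        print(user, domain, count)
```

The output is a starting inventory rather than a verdict: each (user, domain) pair is a candidate for migration to a sanctioned platform, which keeps the exercise focused on closing the gap instead of penalizing employees.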

Executing an AI Risk Assessment

Organizations must map every data flow between internal databases and third-party AI providers. This process identifies the AI Attack Surface, which expands every time a new API is integrated into the workflow. A 2023 IBM report noted that the average cost of a data breach reached $4.45 million. AI-driven breaches can escalate these costs through rapid data exfiltration and automated credential stuffing. Quantifying this impact allows CISOs to prioritize resources based on actual business risk. Success depends on identifying exactly where sensitive intellectual property meets external neural networks.
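One common way to quantify this impact is annualized loss expectancy, ALE = SLE × ARO (single loss expectancy times annual rate of occurrence). The sketch below applies that formula to an inventory of data flows and keeps only those where sensitive data reaches an external AI service. The flows and their SLE and ARO estimates are hypothetical; the $4.45 million IBM average is used only as a placeholder SLE.

```python
from dataclasses import dataclass


@dataclass
class DataFlow:
    source: str
    destination: str
    external_ai: bool                  # does the flow reach a third-party AI provider?
    sensitive: bool                    # does it carry IP, PII, or regulated data?
    single_loss_expectancy: float      # estimated cost of one incident, USD
    annual_rate_of_occurrence: float   # estimated incidents per year

    @property
    def annualized_loss(self) -> float:
        """ALE = SLE x ARO."""
        return self.single_loss_expectancy * self.annual_rate_of_occurrence


def ai_attack_surface(flows: list[DataFlow]) -> list[DataFlow]:
    """Flows where sensitive data meets an external model, highest expected loss first."""
    exposed = [f for f in flows if f.external_ai and f.sensitive]
    return sorted(exposed, key=lambda f: f.annualized_loss, reverse=True)


if __name__ == "__main__":
    inventory = [
        DataFlow("crm_db", "llm_vendor_api", True, True, 4_450_000, 0.10),
        DataFlow("public_wiki", "llm_vendor_api", True, False, 50_000, 0.50),
        DataFlow("source_repo", "code_assistant_api", True, True, 2_000_000, 0.30),
        DataFlow("hr_db", "internal_reporting", False, True, 1_000_000, 0.05),
    ]
    for flow in ai_attack_surface(inventory):
        print(f"{flow.source} -> {flow.destination}: ALE ${flow.annualized_loss:,.0f}")
```

Ranking by ALE rather than by SLE alone is what lets a CISO argue priorities in business terms: a flow with a smaller worst case can still carry more expected annual loss if incidents are more likely.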

Establishing AI Governance

Effective governance starts with the C-suite. Boards need a dedicated reporting structure for AI-related risks to ensure oversight isn't buried in technical silos. Security teams should adopt Secure-by-Design principles for all internal AI development, treating model weights and training data as critical assets. Executive training is non-negotiable for modern mastery. Leadership must participate in AI Strategy Workshops to understand the tactical shifts required in the coming year. This top-down approach ensures that security isn't a hurdle but a foundational element of the corporate AI and cybersecurity strategy.

Strategic Leadership: The Role of the vCISO and AI Consultants

The integration of AI and cybersecurity is no longer a technical choice; it's a fundamental shift in corporate governance. A Virtual Chief Information Security Officer (vCISO) serves as the critical bridge between complex technical AI risks and measurable business value. They translate the intricacies of neural network vulnerabilities into the language of the balance sheet. By leveraging a vCISO, organizations gain access to elite leadership without the $230,000 average annual salary required for a full-time executive. This strategic oversight ensures that AI adoption doesn't outpace the firm's ability to defend its perimeter.

Resilience in the AI age requires constant adaptation rather than static defenses. A vCISO engaged on a monthly retainer provides ongoing guidance as the digital battlefield evolves. This isn't a "set and forget" service. It involves continuous stress-testing of adversarial AI defenses and the refinement of Zero-Trust architectures. Regular Executive AI Strategy Workshops are central to this process. These sessions align the board of directors with security goals, ensuring that 100% of the leadership team understands the tactical necessity of cyber defense. Security has transitioned from being a cost center to a primary competitive advantage. Companies that prove their AI systems are secure earn a 20% higher trust rating from B2B clients compared to those with opaque security postures.

Why a vCISO is Critical for AI Strategy

A vCISO provides high-level expertise without the massive cost-overhead of a traditional C-suite hire. They offer an independent, objective evaluation of the 3,500+ cybersecurity vendors currently flooding the market with AI-labeled products. This prevents organizations from falling victim to "vaporware" or redundant software. Most importantly, they bridge the communication gap between the IT department and the Board of Directors. They ensure that technical metrics are presented as strategic outcomes that drive growth and mitigate liability.

Mastering the Future with Dr. Daniel Glauber

Navigating the complex intersection of AI and security requires a roadmap grounded in data. Dr. Daniel Glauber’s definitive work offers this through 50+ real-world case studies and 18 comprehensive chapters. These resources provide the actionable frameworks necessary to survive and thrive in the current threat environment. Leaders shouldn't wait for a breach to seek guidance. Engage Dr. Glauber for Keynote Speaking or Strategic Advisory to future-proof your firm against the next generation of automated threats. Mastery of AI and cybersecurity is the definitive challenge, and opportunity, of our decade.

Mastering the 2026 Digital Battlefield

The transition toward 2026 demands a total departure from legacy defenses that can't keep pace with neural network-driven attacks. Organizations must adopt the Glauber Framework to survive the ongoing arms race between adversarial and defensive technologies. This evolution isn't just about deploying software; it's about building strategic resilience with the proven methodologies set out across 18 comprehensive chapters.

Understanding the intersection of AI and cybersecurity requires more than technical patches. It demands the kind of expertise that Dr. Daniel Glauber, author of 'Cybersecurity in the Age of Artificial Intelligence', brings to global mid-to-large organizations as a veteran vCISO with 30+ years in tech innovation. By moving from a state of potential vulnerability to one of strategic readiness, leadership teams can navigate these critical domains effectively. His 50+ real-world case studies show that proactive defense is the only viable path forward.

The tools for mastery are within reach for those ready to lead. Secure your organization's future with Dr. Daniel Glauber's Strategic AI Advisory and transform your security posture today. You're fully capable of turning these complex challenges into your organization's greatest competitive advantage.

Frequently Asked Questions

What is the primary difference between AI and traditional cybersecurity?

Traditional security relies on static, rule-based logic; AI uses dynamic neural networks to adapt to evolving threats in real time. Legacy systems follow "if-then" protocols that often miss zero-day exploits. AI systems can process 100 terabytes of data daily to identify anomalies that bypass signature-based detection. It's a fundamental shift from reactive defense to predictive mastery on the digital battlefield.

How can AI be used to improve threat detection and response?

AI accelerates threat detection through automated pattern recognition, flagging many threats within seconds and shortening a breach lifecycle that IBM's Cost of a Data Breach research has put at an average of 277 days to identify and contain. By deploying machine learning algorithms, organizations can analyze millions of events per second. This speed allows for autonomous countermeasures that isolate infected endpoints before a breach spreads across the network, ensuring strategic readiness against sophisticated adversaries.
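Mean time to detect (MTTD) is straightforward to compute once incident start and detection times are recorded: it is the average of detection time minus start time across incidents. A minimal sketch, assuming each incident is stored as a hypothetical `(started, detected)` pair of `datetime` values; in practice the start time is usually reconstructed after the fact during the investigation.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average gap between when an incident began and when it was detected."""
    if not incidents:
        raise ValueError("at least one incident is required")
    gaps = [detected - started for started, detected in incidents]
    return sum(gaps, timedelta()) / len(gaps)


if __name__ == "__main__":
    history = [
        (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 9, 30)),    # 30 minutes
        (datetime(2024, 1, 2, 14, 0), datetime(2024, 1, 2, 15, 30)),  # 90 minutes
    ]
    print(mean_time_to_detect(history))  # 1:00:00
```

Tracking this figure before and after deploying automated detection is the most direct way to test speed-up claims against your own environment.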

What are the biggest risks of using artificial intelligence in cybersecurity?

The primary risk involves adversarial AI, where attackers use the same technology to generate 1,000 phishing variants in minutes. Poisoning training data is another critical vulnerability that can corrupt a model's decision-making logic. If a meaningful share of the training set, such as 10%, is compromised, the security model can become a liability rather than a defense, creating new attack vectors.
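A toy example shows how poisoning corrupts decision logic. The sketch assumes a one-feature nearest-centroid classifier and a deliberately tiny, invented dataset (the feature could be, say, a count of risky API calls). By slipping a few mislabeled samples into the training set, an attacker drags the "benign" centroid toward malicious territory, so a borderline sample that was previously flagged is now waved through. Real models and datasets behave very differently in scale, so no specific poisoning threshold should be read from this example.

```python
from statistics import mean


def train_centroids(samples: list[tuple[float, str]]) -> dict[str, float]:
    """Learn one centroid (mean feature value) per label."""
    by_label: dict[str, list[float]] = {}
    for value, label in samples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}


def predict(centroids: dict[str, float], value: float) -> str:
    """Assign the label whose centroid is closest."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))


# Hypothetical training data: low feature values are benign, high are malicious.
clean = [(0, "benign"), (1, "benign"), (2, "benign"),
         (8, "malicious"), (9, "malicious"), (10, "malicious")]
# Attacker injects malicious-looking samples mislabeled as benign.
poison = [(9, "benign")] * 3

if __name__ == "__main__":
    print(predict(train_centroids(clean), 6))           # malicious
    print(predict(train_centroids(clean + poison), 6))  # benign
```

Nothing about the model's code changed between the two runs; only the data did, which is why training pipelines need the same provenance controls as production systems.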

Can AI-driven cyber attacks bypass traditional firewalls and antivirus software?

Yes. AI-driven attacks bypass traditional defenses by using polymorphic code that can change its signature every 30 seconds. Legacy firewalls and antivirus tools depend on known-threat databases, which are largely useless against the 350,000 new malware variants discovered daily in 2023. These attacks find gaps in static perimeter defenses that lack behavioral analysis capabilities.

How should a board of directors oversee AI-related cybersecurity risks?

Boards must oversee these risks by integrating AI governance into their enterprise risk management framework. They should demand quarterly audits on model transparency and third-party AI dependencies. Since 60% of board members lack technical security backgrounds, appointing a specialized advisor ensures that the strategic vision aligns with the technical reality of the digital battlefield and evolving regulatory requirements.

Will AI eventually replace human cybersecurity professionals?

AI won't replace human professionals; it'll augment their capabilities by automating 70% of routine Tier-1 SOC tasks. Human intuition remains vital for high-level strategic decision-making and ethical judgment. The goal is a hybrid model where machines handle the data-heavy lifting while experts focus on complex threat hunting and actionable frameworks that require a nuanced understanding of organizational goals.

What is an AI risk assessment, and why does my organization need one?

An AI risk assessment is a definitive audit of an organization's machine learning models and data pipelines to identify potential failure points. Organizations need this because 40% of AI implementations fail due to unmanaged security vulnerabilities. This assessment creates a roadmap for building a resilient zero-trust architecture that survives modern attack tactics while maintaining the integrity of critical data domains.

How does a Virtual CISO help an organization navigate AI security?

A Virtual CISO provides the strategic leadership needed to master the intersection of AI and cybersecurity without the overhead of a full-time executive. They implement structured security programs that address specific AI threats and regulatory compliance. This expertise ensures that mid-sized firms can deploy groundbreaking defense strategies that were once reserved for Fortune 500 companies, bridging the gap between theory and practice.