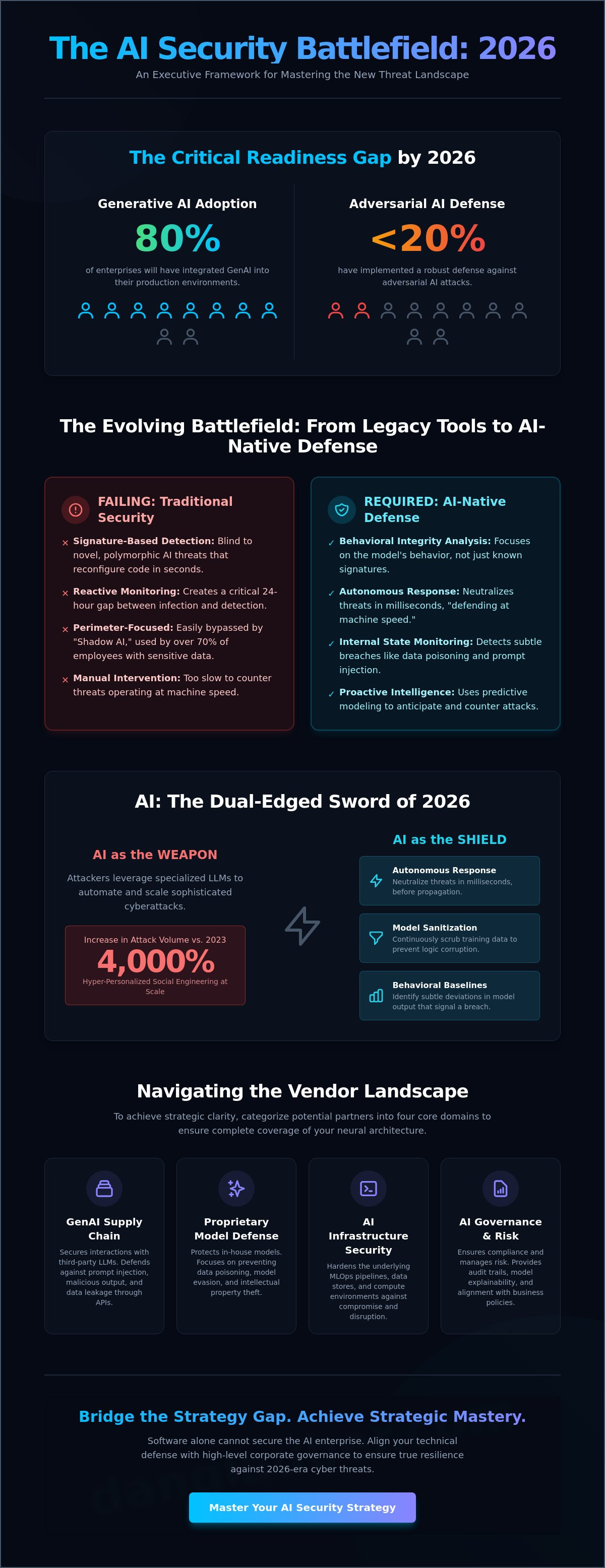

By 2026, Gartner projects that 80% of enterprises will have integrated generative AI into their production environments, yet fewer than 20% of these organizations have implemented a robust defense against adversarial AI. You've likely felt the mounting pressure from the board to deploy Large Language Models while simultaneously wrestling with the chaos of shadow AI and tool fatigue from fragmented platforms. It's a precarious position where the drive for innovation often outpaces the necessity for safety. Choosing the right AI security company is the definitive step toward reclaiming control over this digital battlefield.

This article provides you with an actionable framework to master the complex vendor landscape. You'll learn how to vet partners that offer more than just a technical patch; we're looking for mastery in both tactical countermeasures and long-term strategic governance. We'll break down the specific criteria for identifying a partner that aligns your technical defense with your core business objectives to ensure your organization remains resilient in the face of evolving cyber threats.

Key Takeaways

- Identify the critical evolution from traditional signature-based defense to the adversarial AI countermeasures required for the 2026 digital battlefield.

- Categorize the vendor landscape into four domains to ensure your chosen AI security company provides deep protection for neural networks and GenAI applications.

- Utilize a comprehensive 10-point executive checklist to vet potential partners for technical efficacy, seamless integration, and long-term scalability.

- Bridge the "Strategy Gap" by learning why software alone cannot resolve the tension between tool output and your board’s specific risk tolerance.

- Master the intersection of AI and cybersecurity through strategic guidance that aligns technical defense with high-level corporate governance.

The Evolution of the AI Security Company: Defending the 2026 Digital Battlefield

A modern AI security company is no longer a peripheral software vendor; it's a specialized architect of neural resilience. By 2026, the enterprise risk surface has moved beyond simple endpoints to the weights, biases, and data pipelines of Large Language Models (LLMs). Defending this new frontier requires a fundamental shift from signature-based detection to 2026-era adversarial AI countermeasures. While legacy systems look for known file hashes, an AI-native security entity focuses on the behavioral integrity of the model itself.

The intersection of AI and cybersecurity represents one of the most volatile fronts in modern security. Organizations are moving away from reactive monitoring, which often leaves a 24-hour gap between infection and detection, toward proactive, autonomous attack signal intelligence. This transition is critical because 2026 threats operate at speeds that exceed human cognitive limits. Mastery of this domain requires a partner that understands the fragile nature of neural networks and the sophisticated tactics used to subvert them.

Why Traditional Cybersecurity Firms Are No Longer Enough

Legacy XDR and SIEM platforms are failing against automated, polymorphic AI threats that can reconfigure their own code every 15 seconds. These traditional tools were built for a world of static rules, not the fluid reality of 2026. A major blind spot is "Shadow AI," where over 70% of employees now use unauthorized generative tools to process sensitive corporate data, bypassing traditional perimeter defenses entirely. Adversarial AI is the intentional manipulation of machine learning models to trigger system failures. This often involves Adversarial Machine Learning techniques, such as data poisoning or prompt injection, designed to force models into making catastrophic errors. Traditional firms lack the specialized frameworks to monitor these internal model states.

The Dual Role of AI: Threat Actor and Defense Strategy

The 2026 threat landscape is defined by a paradox where AI is both the weapon and the shield. Attackers now use specialized LLMs to accelerate reconnaissance and craft hyper-personalized social engineering campaigns at 4,000% higher volume than in 2023. To counter this, an AI security company must deploy autonomous agents capable of "defending at machine speed." This requires a comprehensive approach to cybersecurity in the age of artificial intelligence, one that integrates real-world case studies with predictive modeling. Key elements of this strategy include:

- Autonomous Response: Neutralizing threats in milliseconds before they propagate through the neural fabric.

- Model Sanitization: Continuously scrubbing training data to prevent long-term logic corruption.

- Behavioral Baselines: Identifying deviations in model output that signal a subtle breach.
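
To make the behavioral-baseline idea concrete, here is a minimal Python sketch. It assumes each model response can be reduced to a single numeric signal (for example, a hypothetical per-response toxicity or refusal score produced elsewhere) and flags values that sit more than a chosen number of standard deviations from a rolling baseline. The class name, window size, and 3-sigma threshold are illustrative assumptions, not any vendor's method; production systems typically track many signals with more robust statistics.

```python
from collections import deque
from statistics import mean, stdev


class OutputBaseline:
    """Rolling z-score baseline over one numeric model-output signal.

    The signal (e.g. a hypothetical per-response toxicity score), window
    size, and threshold are illustrative assumptions.
    """

    def __init__(self, window: int = 100, threshold: float = 3.0) -> None:
        self.history: deque = deque(maxlen=window)
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        """Return True if `value` deviates from the baseline; record it only if normal."""
        anomalous = False
        # At least two points are needed to estimate a standard deviation.
        if len(self.history) >= 2:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                # A perfectly constant baseline: any change counts as a deviation.
                anomalous = value != mu
        # Flagged values stay out of the baseline so they cannot shift it.
        if not anomalous:
            self.history.append(value)
        return anomalous


baseline = OutputBaseline(window=50, threshold=3.0)
for score in [0.10, 0.12, 0.11, 0.09, 0.10, 0.11]:
    baseline.observe(score)
print(baseline.observe(0.95))  # True: far outside the recent baseline
```

Flagged values are kept out of the baseline so a burst of malicious outputs cannot pull "normal" toward itself; a slow drift that stays under the threshold would still be absorbed, which is one reason real deployments pair this kind of check with periodic re-baselining against a trusted reference.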

Security leaders must recognize that the era of manual intervention is over. Survival in 2026 depends on strategic readiness and the adoption of frameworks that prioritize the integrity of the AI lifecycle.

Core Domains: Categorizing the AI Security Vendor Landscape

Executives face a fragmented and often chaotic market when evaluating a potential AI security company. To achieve strategic clarity, you must categorize vendors into four distinct domains. This classification prevents overlapping investments and ensures that every vulnerability in your neural architecture is addressed. Mastery of the digital battlefield starts with understanding whether a partner secures the applications your employees use or the proprietary models your engineers build.

Securing the Generative AI Supply Chain

Securing the GenAI supply chain is no longer a peripheral concern. By 2026, industry data suggests that 80% of enterprise AI failures will stem from insecure output handling or prompt injection attacks. A best-in-class AI security company provides deep observability into LLM interactions, identifying malicious intent before it reaches your core systems. These vendors must remain LLM-agnostic. This flexibility ensures your security posture doesn't crumble if you migrate from GPT-5 to a specialized open-source model. It's about maintaining control over the entire data flow, from the initial prompt to the final generated response.
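
One way to picture an LLM-agnostic control point is a thin wrapper that every provider sits behind, so the same prompt and output checks apply whether the model is a hosted API or a self-hosted open-source model. The sketch below is hypothetical: `ChatModel`, `GuardedModel`, and the two regex rules are illustrative stand-ins, and real products rely on trained classifiers rather than a short pattern list. It screens prompts for obvious injection phrasing, redacts strings shaped like AWS access key IDs from responses (a simple form of output handling), and reports both events to an audit callback.

```python
import re
from typing import Callable, Protocol


class ChatModel(Protocol):
    """Any provider (hosted API or open-source model) exposing generate()."""

    def generate(self, prompt: str) -> str: ...


# Hypothetical, deliberately tiny rules; real tools use trained classifiers.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.I),
    re.compile(r"reveal (the )?system prompt", re.I),
]
# Shape of an AWS access key ID: "AKIA" followed by 16 uppercase letters/digits.
SECRET_PATTERN = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


class GuardedModel:
    """Wraps any ChatModel so every prompt and response passes the same checks."""

    def __init__(self, model: ChatModel, audit: Callable[[str, str], None]) -> None:
        self.model = model
        self.audit = audit  # receives (event, detail) for observability

    def generate(self, prompt: str) -> str:
        # Input check: refuse prompts matching known injection phrasing.
        if any(p.search(prompt) for p in INJECTION_PATTERNS):
            self.audit("blocked_prompt", prompt)
            return "[request blocked by policy]"
        response = self.model.generate(prompt)
        # Output check: redact secrets before the response leaves the boundary.
        redacted = SECRET_PATTERN.sub("[REDACTED]", response)
        if redacted != response:
            self.audit("redacted_output", prompt)
        return redacted
```

Because the wrapper depends only on a `generate(prompt) -> str` method, swapping providers means writing a new adapter class rather than rebuilding the policy layer.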

Model Security and Data Integrity

Organizations developing proprietary AI must prioritize the protection of training data and model weights. This is the domain of adversarial defense. Model inversion attacks, where adversaries reconstruct sensitive training data from model outputs, represent a significant threat to intellectual property. In 2026, data provenance has emerged as a critical security metric; you must know exactly where your data originated and how it was modified. This technical depth is a cornerstone of the broader AI and cybersecurity frontier, where the integrity of the model itself becomes the primary target.
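
Data provenance can be approximated with an append-only, hash-chained log: each entry records a source, an action, and the SHA-256 of the data, and is hashed together with the previous entry's hash, so editing any past record breaks verification. This is a minimal sketch under that assumption, not a specific product's design; the class name, fields, and example sources are hypothetical, and a real system would also sign entries and store them outside the pipeline it audits.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
FIELDS = ("source", "action", "data_sha256", "prev_hash")


def _entry_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ProvenanceLog:
    """Append-only, hash-chained record of where data came from and how it changed."""

    entries: list = field(default_factory=list)

    def record(self, source: str, action: str, data: bytes) -> str:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        body = {
            "source": source,
            "action": action,
            "data_sha256": hashlib.sha256(data).hexdigest(),
            "prev_hash": prev,
        }
        entry_hash = _entry_hash(body)
        self.entries.append({**body, "entry_hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in FIELDS}
            if entry["prev_hash"] != prev or entry["entry_hash"] != _entry_hash(body):
                return False
            prev = entry["entry_hash"]
        return True
```

For example, recording an `ingest` step and a `dedupe` step and then altering the first entry's `source` causes `verify()` to return `False`.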

Beyond model protection, the landscape includes AI-Enhanced SecOps and Strategic Governance. SecOps vendors use machine learning to automate threat detection, reducing mean time to respond (MTTR) by as much as 40% in high-volume environments. However, automation without oversight is a liability. Your chosen partners should align their operations with the NIST AI Risk Management Framework to ensure ethical compliance and rigorous risk mitigation. Governance isn't just a checklist; it's the framework that allows for rapid innovation without sacrificing enterprise safety. To build a resilient defense, leaders should explore strategic AI security consulting to align these domains with their specific business objectives.
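
A figure such as a 40% MTTR reduction is only meaningful if you can reproduce the arithmetic on your own incidents. A minimal sketch, assuming you have detection and response timestamps for comparable incidents before and after a tool was introduced; the function names and timestamps are illustrative:

```python
from datetime import datetime, timedelta


def mean_time_to_respond(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (responded_at - detected_at) over (detected_at, responded_at) pairs."""
    total = sum((responded - detected for detected, responded in incidents), timedelta())
    return total / len(incidents)


def percent_reduction(before: timedelta, after: timedelta) -> float:
    # timedelta / timedelta yields a plain float ratio.
    return 100 * (before - after) / before


t0 = datetime(2026, 1, 1, 9, 0)
before = mean_time_to_respond([(t0, t0 + timedelta(hours=4)), (t0, t0 + timedelta(hours=6))])
after = mean_time_to_respond([(t0, t0 + timedelta(hours=3)), (t0, t0 + timedelta(hours=3))])
print(percent_reduction(before, after))  # 40.0
```

Comparing like-for-like incident types matters more than the formula itself; a tool that only handles the easy cases can show a large MTTR drop without improving outcomes on the incidents that matter.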

The Executive Selection Checklist: Vetting Your AI Security Partner

Selecting an AI security company in 2026 requires more than a cursory review of marketing materials. It demands a rigorous, data-driven audit of how their models behave under duress on the modern digital battlefield. CISOs must move beyond feature lists to evaluate how a platform integrates with the existing strategic framework of the organization. The goal isn't just to buy a tool; it's to secure a definitive advantage over evolving adversarial tactics.

Technical and Operational Evaluation Criteria

Efficiency in SecOps hinges on the signal-to-noise ratio. A 2025 industry report indicated that 68% of security analysts face burnout due to redundant alerts. Your chosen AI security company must demonstrate a false-positive rate below 0.5% to ensure your team focuses on legitimate threats. Integration is the next critical pillar. The platform shouldn't operate as a silo; it must natively support Zero-Trust architectures, providing granular visibility without adding friction. Finally, measure the performance tax. If a security layer adds more than 15 milliseconds of latency to your AI applications, it will likely be bypassed by developers seeking speed over safety.
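
The two numeric thresholds in this paragraph (a false-positive rate under 0.5% and no more than 15 ms of added latency) can be checked directly against pilot data. The sketch below assumes you have labeled counts from a proof of concept and per-request latency overhead measured against an unprotected baseline; `PilotResult` and the gate function are hypothetical names, and judging latency at the nearest-rank 95th percentile is an assumption, one reasonable choice among several.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Labeled outcomes from a hypothetical proof-of-concept pilot."""

    false_positives: int           # benign events the tool flagged
    true_negatives: int            # benign events the tool correctly ignored
    true_positives: int            # malicious events the tool flagged
    added_latency_ms: list[float]  # per-request overhead vs. an unprotected baseline


def false_positive_rate(r: PilotResult) -> float:
    # FPR = FP / (FP + TN): share of benign events wrongly flagged.
    return r.false_positives / (r.false_positives + r.true_negatives)


def false_alert_share(r: PilotResult) -> float:
    # FP / (FP + TP): share of raised alerts that turn out to be false.
    return r.false_positives / (r.false_positives + r.true_positives)


def p95(samples: list[float]) -> float:
    # Nearest-rank 95th percentile; the rank is ceil(0.95 * n) in integer math.
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def passes_gate(r: PilotResult, max_fpr: float = 0.005, max_p95_ms: float = 15.0) -> bool:
    """Apply the 0.5% false-positive and 15 ms latency thresholds."""
    return false_positive_rate(r) < max_fpr and p95(r.added_latency_ms) <= max_p95_ms
```

The second ratio is worth asking vendors about explicitly. With 40 false positives among 100,000 benign events and 10 true detections, the false-positive rate is 0.04%, comfortably under 0.5%, yet 80% of the alerts an analyst sees are still false. A low false-positive rate alone does not guarantee a quiet queue when benign traffic vastly outnumbers attacks.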

- Signal-to-Noise Benchmark: Does the vendor provide audited data on alert accuracy and noise reduction?

- Proof of Resilience: Can they demonstrate resistance to adversarial attack vectors like prompt injection and model inversion?

- Explainable AI (XAI): Does the tool provide a clear, human-readable rationale for every flagged threat?

- Zero-Trust Interoperability: Does it integrate with existing identity and access management (IAM) systems?

- Latency Thresholds: Is the operational overhead documented at no more than 15 milliseconds?

- Regulatory Alignment: Does the platform comply with the EU AI Act and current US federal AI policy (Executive Order 14110 was rescinded in January 2025)?

- Data Sovereignty: Is there a contractual guarantee that your proprietary data won't be used to train the vendor's global models?

- Secure-by-Design Lifecycle: Does the vendor follow a verified secure software development lifecycle for their own AI?

- Scalability: Can the infrastructure handle a 300% increase in request volume without service degradation?

- Continuous Feedback Loops: Does the system ingest local threat intelligence to refine its detection capabilities in real time?
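
To keep vendor comparisons consistent, the ten questions above can be recorded as a simple pass/fail sheet. In this sketch, unanswered items count as failures, and three items are treated as hard requirements; that choice of must-haves and the item keys are illustrative assumptions that each organization should set for itself.

```python
CHECKLIST = (
    "signal_to_noise_benchmark",
    "proof_of_resilience",
    "explainable_ai",
    "zero_trust_interoperability",
    "latency_thresholds",
    "regulatory_alignment",
    "data_sovereignty",
    "secure_by_design_lifecycle",
    "scalability",
    "continuous_feedback_loops",
)

# Hypothetical hard requirements: failing any of these disqualifies a vendor.
MUST_HAVE = {"data_sovereignty", "regulatory_alignment", "proof_of_resilience"}


def score_vendor(answers: dict[str, bool]) -> tuple[int, list[str], bool]:
    """Return (items passed, items failed or unanswered, all must-haves passed)."""
    # Missing answers default to False, so gaps in a vendor's responses count against it.
    failed = [item for item in CHECKLIST if not answers.get(item, False)]
    passed = len(CHECKLIST) - len(failed)
    return passed, failed, MUST_HAVE.isdisjoint(failed)
```

A vendor that passes nine of ten items but misses "scalability" still clears the must-have bar; one that answers only a single question does not.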

Compliance, Ethics, and Data Privacy Standards

The regulatory environment has shifted from guidance to enforcement. Under the EU AI Act, the most serious violations can draw fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher. You've got to verify that your partner isn't just "aware" of these laws but has baked them into their code. A major red flag is any ambiguity regarding data handling. If a vendor uses your sensitive corporate data to improve their baseline models, they're creating a massive privacy leak. Demand a "Secure-by-Design" certification that proves their internal development processes are as hardened as the defenses they sell to you. Mastery of these domains ensures your organization remains both protected and compliant.

The Strategy Gap: Why Software Alone Cannot Secure the AI Enterprise

Executives often fall into the trap of believing that a high-priced license for an automated platform equates to a fortified perimeter. It doesn't. Buying an "AI security tool" is a tactical acquisition, not a defensive posture. The real challenge lies in the Strategy Gap: the critical disconnect between the raw data generated by a software suite and the risk tolerance threshold set by the board. An elite cybersecurity firm understands that technology is merely a delivery mechanism for a broader governance strategy. Without human oversight and strategic alignment, code is just noise on a screen. Your AI security company must provide more than just scripts; it must provide the logic that governs them.

Bridging Technical Risk and Business Value

Boards don't need to see every flagged anomaly on a technical dashboard. They need to understand how those anomalies impact the bottom line. A Virtual CISO (vCISO) acts as the essential translator in this environment, turning AI threat telemetry into quantitative financial risk assessments. Following the SEC's 2023 cybersecurity incident disclosure rules, leaders require actionable frameworks that dictate response protocols based on business continuity. This strategic advisory prevents "Security Tool Fatigue," a condition currently affecting 62% of security professionals, by optimizing existing stacks instead of adding redundant layers. A partner should focus on the intersection of AI and security to ensure every technical alert serves a business objective.
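
A common way to express threat telemetry in financial terms is the classic annualized loss expectancy (ALE) formula: single loss expectancy (asset value × exposure factor) multiplied by the annualized rate of occurrence. The article does not prescribe a specific model, so treat this as one standard technique a vCISO might use; the class name, scenarios, and dollar figures are purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class AIRiskScenario:
    name: str
    asset_value: float      # value at risk, in dollars
    exposure_factor: float  # fraction of the asset lost per incident (0..1)
    annual_rate: float      # expected incidents per year (ARO)

    def single_loss(self) -> float:
        # SLE = asset value x exposure factor
        return self.asset_value * self.exposure_factor

    def annualized_loss(self) -> float:
        # ALE = SLE x ARO
        return self.single_loss() * self.annual_rate


def board_summary(scenarios: list[AIRiskScenario]) -> list[tuple[str, float]]:
    """Rank scenarios by annualized loss so the board sees dollars, not alerts."""
    ranked = sorted(scenarios, key=lambda s: s.annualized_loss(), reverse=True)
    return [(s.name, round(s.annualized_loss(), 2)) for s in ranked]
```

Given a prompt-injection data leak ($2M asset, 25% exposure, 0.5 incidents per year) and a training-data poisoning event ($5M asset, 10% exposure, 0.2 per year), the leak ranks first at $250,000 per year versus $100,000, even though the poisoning scenario involves the more valuable asset.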

Building a Cyber-Resilient Culture

The human element is involved in 74% of data breaches, according to Verizon's 2023 Data Breach Investigations Report, yet employee training often remains a neglected compliance checkbox. In the era of adversarial AI, this vulnerability is magnified by deepfakes and sophisticated social engineering. A robust strategy mandates a Human-in-the-Loop requirement for high-stakes autonomous response systems. This prevents automated defensive actions from cascading into systemic failures. Executive workshops serve as the foundation for this implementation. These sessions ensure that the leadership team isn't just aware of the digital battlefield but is actively prepared to command it. Mastery of these critical domains involves the following pillars:

- Strategic Governance: Defining who owns AI risk across the C-suite.

- Adversarial Readiness: Simulating AI-driven attacks to test organizational response.

- Ethical Guardrails: Ensuring AI deployments don't violate privacy regulations or internal values.
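
The Human-in-the-Loop requirement described above can be expressed as a simple gate: low-impact actions run autonomously, while high-impact ones execute only after explicit approval. The two-level `Impact` scale, the action names, and the `run`/`approve` callbacks are hypothetical placeholders for whatever response tooling and approval workflow an organization actually uses.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Impact(Enum):
    LOW = 1
    HIGH = 2


@dataclass
class ResponseAction:
    description: str  # e.g. "block single IP" or "shut down LLM gateway"
    impact: Impact


def execute(action: ResponseAction,
            run: Callable[[ResponseAction], None],
            approve: Callable[[ResponseAction], bool]) -> str:
    """Run low-impact actions autonomously; require human approval for high-impact ones."""
    # approve() is only consulted for HIGH actions (short-circuit evaluation).
    if action.impact is Impact.HIGH and not approve(action):
        return "held for review"
    run(action)
    return "executed"
```

Defaulting to "held for review" when approval is withheld means a compromised or misfiring detector cannot, on its own, trigger a high-impact action such as shutting down a production gateway.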

Choosing an AI security company that prioritizes these cultural elements ensures that your defense is as dynamic as the threats you face. Technology is your sword, but governance is the hand that guides it.

Ready to move beyond basic tools and secure your organization's future? Master the intersection of AI and security with our strategic advisory services.

Strategic Mastery: Positioning Your Organization with Dr. Daniel Glauber

Dr. Daniel Glauber stands as the definitive guide for organizations navigating the volatile intersection of AI and security. Selecting an AI security company isn't merely a procurement decision; it's a move on a digital battlefield where the rules change weekly. Dr. Glauber bridges the gap between the boardroom and the server room, translating complex adversarial AI threats into strategic business logic. His approach ensures that security doesn't stifle innovation but rather provides the guardrails necessary for aggressive growth in 2026. By grounding abstract neural network concepts in 18 comprehensive chapters of foundational research, he offers a roadmap that moves beyond hype into high-stakes defense.

The vCISO Advantage for AI Governance

Many organizations face a critical talent shortage, as 68% of security leaders report they lack the internal expertise to secure generative AI deployments. A Virtual CISO (vCISO) provides a solution to this gap, offering elite strategic leadership without the $300,000 annual overhead of a full-time executive hire. Dr. Glauber’s vCISO model focuses on building a custom AI security roadmap that addresses your specific industry risks. This isn't a generic template. It's a "Pragmatic Visionary" framework that balances rapid innovation with rigorous defense. This methodology utilizes 50+ real-world case studies to identify potential attack vectors before they're exploited, ensuring your AI security company partnership delivers maximum ROI.

- Development of custom governance frameworks for LLM and neural network integration.

- Quarterly strategic audits to align AI initiatives with evolving global compliance standards.

- Direct advisory for internal DevSecOps teams to implement Zero-Trust architectures.

Taking the Next Step toward AI Resilience

Moving from a checklist to a state of total AI resilience requires more than passive reading. It demands a shift in organizational culture and a commitment to mastery. Dr. Glauber’s book, "Cybersecurity in the Age of Artificial Intelligence," serves as the foundational roadmap for this journey. It provides the technical depth and strategic clarity needed to outpace modern threats. For executives who need to align stakeholders quickly, board-level briefings are available to bridge the communication gap between technical teams and investors. These briefings ensure that every decision-maker understands the gravity of the current threat landscape.

Don't leave your organization's future to chance. You can schedule a strategic briefing with Dr. Daniel Glauber to transform your security posture from reactive to proactive. Whether you're seeking executive AI strategy workshops or a keynote engagement to inspire your leadership team, the path to mastery begins with expert guidance. It's time to move beyond the checklist and secure your position on the digital battlefield.

Mastering the Strategic Frontier of 2026

Navigating the complex landscape of 2026 requires more than just a software license. It demands a partnership built on actionable frameworks and deep technical mastery. Choosing the right AI security company isn't just an IT decision; it's a strategic imperative that determines your organization's resilience against adversarial AI. Success depends on bridging the strategy gap by vetting partners through a rigorous executive checklist that prioritizes both defense and innovation. You've seen how software alone fails to secure the enterprise without expert-led oversight.

Dr. Daniel Glauber offers a unique vantage point at the intersection of AI and cybersecurity. As the author of "Cybersecurity in the Age of Artificial Intelligence" and a seasoned expert with 30+ years of technology and innovation experience, he provides the foresight necessary to lead. Having served as a vCISO for global organizations, Dr. Glauber transforms abstract threats into concrete countermeasures. Don't leave your 2026 roadmap to chance. Secure your enterprise with Dr. Daniel Glauber’s Strategic Advisory Services and transform your security posture into a definitive competitive advantage. Your journey toward total strategic readiness starts today.

Frequently Asked Questions

What exactly does an AI security company do differently than a traditional one?

An AI security company focuses on protecting the integrity of machine learning models and data pipelines rather than just perimeter defense. Traditional firms secure the network; these specialists secure the logic and weights of neural networks. Gartner projects that by 2026, 40% of security breaches will involve direct attacks on AI models. This shift requires specialized countermeasures against prompt injection and model inversion that traditional firewalls can't detect.

Is AI security just for tech companies, or do mid-market firms need it too?

Mid-market firms require specialized protection because 62% of small-to-medium enterprises became targets of AI-driven phishing in 2024. Size doesn't grant immunity on the digital battlefield. These organizations often lack the internal resources to build custom neural network defenses. Partnering with a dedicated AI security company provides an actionable framework to scale protection without hiring a full 24/7 security operations center.

How do I know if an AI security tool is actually using AI or just marketing hype?

You can distinguish real AI security from marketing hype by requesting a technical breakdown of the underlying Large Language Model or neural architecture. Genuine tools provide specific documentation on their training datasets and inference latency. If a vendor can't explain their "black box" logic or lacks third-party validation from bodies like MITRE, it's likely just basic automation. Demand to see a demonstration of their adversarial AI testing protocols.

What is the most significant AI-related security risk for enterprises in 2026?

Automated adversarial attacks that exploit model drift and data poisoning represent the most critical threat in 2026. Research from the 2025 AI Security Index indicates that 75% of enterprises now struggle with "shadow AI" implementations. These unauthorized tools create unmonitored attack vectors into the corporate core. Without a strategy for the intersection of AI and cybersecurity, attackers can manipulate model outputs to bypass traditional authentication protocols.

Should I hire a full-time CISO or use a vCISO for AI security strategy?

A vCISO is often the superior choice for mid-sized firms, while enterprises with over 1,000 employees typically require a full-time leader. According to 2025 industry surveys, 48% of organizations now utilize fractional leadership to access high-level strategic readiness without the typical $250,000 annual salary benchmark. This model provides the technical depth needed for AI security strategy while maintaining fiscal discipline. It allows for rapid deployment of actionable frameworks.

How much should an organization budget for AI security implementation?

Organizations should allocate between 10% and 15% of their total IT budget to security, with roughly 25% of that specifically earmarked for AI-related initiatives. The 2024 SANS Institute report highlights that firms failing to invest at this level face a 3x higher risk of data exfiltration. This budget covers AI security company fees, employee training, and continuous monitoring tools. Proper resource allocation ensures you aren't left vulnerable on the evolving digital battlefield.
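
The budget guideline is easy to sanity-check: 10% to 15% for security, with 25% of that for AI, works out to 2.5% to 3.75% of the total IT budget. A minimal sketch, with the percentages from this answer as defaults and an illustrative function name:

```python
def ai_security_budget(it_budget: float,
                       security_share: tuple[float, float] = (0.10, 0.15),
                       ai_share: float = 0.25) -> tuple[float, float]:
    """Return the (low, high) AI-security allocation implied by the guideline."""
    low, high = security_share
    # Security slice of IT spend, then the AI-earmarked portion of that slice.
    return it_budget * low * ai_share, it_budget * high * ai_share


print(ai_security_budget(20_000_000))  # (500000.0, 750000.0)
```

For a $20 million IT budget, this yields roughly $500,000 to $750,000 for AI security.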

Can AI security companies help with compliance for the EU AI Act?

Specialized firms provide the technical auditing and documentation required to meet the strict transparency standards of the EU AI Act. Since the regulation allows fines of up to 7% of global turnover for the most serious violations, expert guidance is essential. These companies implement the necessary "human-in-the-loop" protocols and bias detection systems. They transform abstract regulatory requirements into a grounded, definitive defense strategy for global operations.

What happens if our AI security tool is itself compromised by an adversarial attack?

If your defense tool is compromised, your organization must immediately trigger a pre-defined Zero-Trust isolation protocol to contain the breach. This scenario, known as a "cascading model failure," occurred in 12% of simulated red-team exercises in 2025. You'll need an offline backup of your clean weights and a secondary validation layer to regain control. Mastering these recovery tactics is a core knowledge area for any resilient modern enterprise.
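
Restoring from an offline backup of clean weights presupposes a way to prove that the weights you are running, or restoring, are the clean ones. A minimal sketch, assuming a manifest of SHA-256 digests recorded when the model was last validated and stored offline; the function names and file names are illustrative. Hash matching detects tampering with files on disk, but it cannot tell you whether the originally validated weights were already poisoned.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Stream in 1 MiB chunks so multi-gigabyte weight files don't load into memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(weights_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Compare each weight file against a known-good hash manifest.

    `manifest` maps relative file names to SHA-256 hex digests recorded when
    the model was last validated. Returns mismatched or missing files; an
    empty list means the files match the clean copy.
    """
    problems = []
    for name, expected in manifest.items():
        path = weights_dir / name
        if not path.is_file() or sha256_file(path) != expected:
            problems.append(name)
    return problems
```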