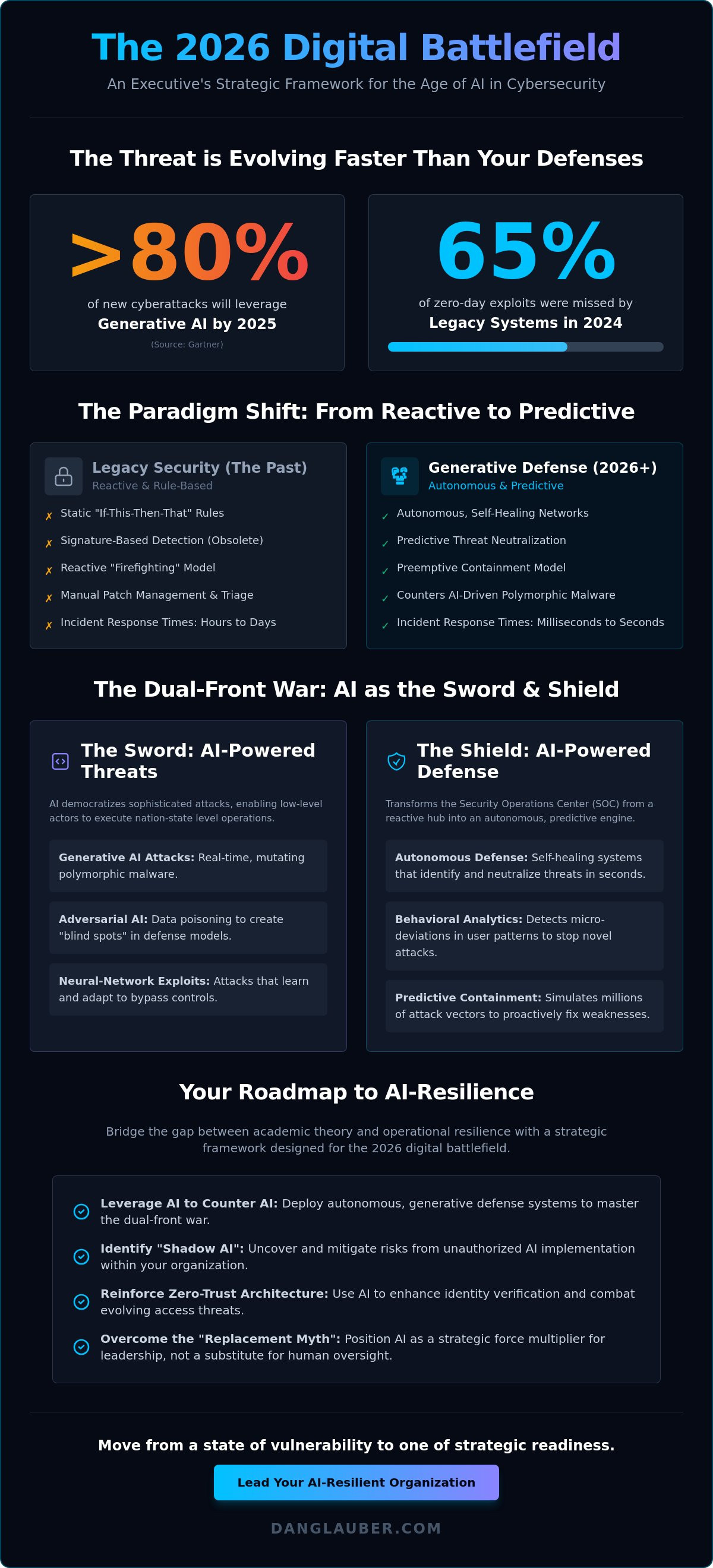

The 2026 digital battlefield won't be won with legacy firewalls or manual patch management; it'll be decided by the speed of your neural networks. Industry analysts at Gartner predict that by 2025 generative AI will be leveraged in over 80% of new cyberattacks, rendering traditional defense mechanisms increasingly obsolete. You're likely exhausted by the constant noise of AI hype while facing the cold reality of adversarial AI in cybersecurity bypassing your existing controls. It's a significant challenge to translate these complex technical risks into a strategic narrative that your board of directors can actually act upon.

I've designed this guide to help you move from a state of vulnerability to one of strategic readiness. You'll gain a definitive understanding of the intersection of AI and cybersecurity through an actionable framework built for executive leadership. We'll analyze 50+ real-world case studies and 18 comprehensive knowledge areas that provide the tools for long-term resilience. This briefing outlines the exact roadmap you need to lead an AI-resilient organization with confidence and precision.

Key Takeaways

- Understand why 2026 marks the definitive transition from heuristic-based security to autonomous, generative defense systems.

- Master the dual-front war by leveraging AI in cybersecurity to counter sophisticated, democratized neural network attacks.

- Overcome the "Replacement Myth" and learn how to use AI as a strategic force multiplier for executive leadership rather than a substitute for human oversight.

- Deploy an actionable security framework that identifies hidden "Shadow AI" risks and reinforces your Zero-Trust Architecture against evolving identity threats.

- Bridge the gap between academic theory and operational resilience using a strategic roadmap designed for the 2026 digital battlefield.

The Evolution of AI in Cybersecurity: Why 2026 is a Strategic Turning Point

The year 2026 marks a definitive shift where AI in cybersecurity moves from a supportive utility to an autonomous commander. We've moved beyond the 1980s era of static heuristics where security relied on simple "if-this-then-that" rules. Today's threat actors deploy neural-network attacks that mutate in real time, rendering traditional signature-based detection obsolete. In 2024, industry data indicated that legacy systems missed 65% of zero-day exploits; by 2026, that gap will widen to a catastrophic level without a transition to Generative Defense. This isn't just a technical upgrade. It's a fundamental restructuring of the digital battlefield. Organizations that fail to adapt face more than just data breaches; they face total operational paralysis. Understanding the broad applications of artificial intelligence is now a prerequisite for any C-suite executive tasked with safeguarding corporate assets. The stakes involve the very survival of the enterprise.

The Paradigm Shift from Reactive to Predictive

Machine learning models now process telemetry data at petabyte scales to identify subtle anomalies before an exploit is even attempted. This transition allows security teams to move away from "firefighting" toward a model of preemptive containment. Large Language Models (LLMs) have reduced incident response timelines from hours to seconds by synthesizing complex log data into actionable intelligence for SOC analysts. Predictive Defense is the core of 2026 security strategy, defined as the proactive neutralization of threats through continuous algorithmic foresight. By utilizing these models, organizations can simulate millions of attack vectors simultaneously, identifying weak points in the perimeter before an adversary can find them.

Bridging the Gap Between Technical Complexity and Business Risk

Cybersecurity is no longer a localized IT concern; it's a primary driver of financial and operational resilience. Executives must view AI as a strategic asset that protects the balance sheet. Integrating these insights with the approach laid out in "Cybersecurity in the Age of Artificial Intelligence: A Strategic Framework for 2026" allows for a unified defense posture. Actionable frameworks matter most for non-technical stakeholders, who need to translate technical "noise" into clear risk-mitigation steps. This approach ensures that AI in cybersecurity serves as a shield for the bottom line. It transforms abstract technical concepts into a language of mastery and strategic readiness that leadership can act upon with confidence.

- Autonomous Systems: Moving beyond scripts to self-healing networks.

- Neural-Network Attacks: Countering AI-driven polymorphic malware.

- Financial Resilience: Viewing security as a core business continuity factor.

The Dual-Perspective: AI as Both the Shield and the Sword

The intersection of AI and cybersecurity represents a paradigm shift where machine speed is the only velocity that matters. By 2026, the traditional perimeter is obsolete; in its place stands a dynamic, fluctuating frontier. This isn't a static defense but a dual-front war where neural networks serve as both the primary weapon and the ultimate protector. Security leaders must recognize that AI in cybersecurity democratizes sophisticated attacks, allowing low-level actors to execute nation-state-level operations with minimal overhead.

The emergence of adversarial AI introduces a critical vulnerability: data poisoning. Adversaries now target the very models we rely on for protection. By injecting malicious data into training sets, attackers can create "blind spots" in security software. Organizations should align their defense strategies with the CISA AI Security Framework to ensure their models remain resilient against these sophisticated manipulation tactics. This strategic alignment is vital for maintaining a posture of mastery in the 2026 digital battlefield.
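To make the "blind spot" idea concrete, here is a minimal sketch of label-flipping data poisoning against a toy detector. Everything in it is an illustrative assumption: the one-dimensional "risk score" feature, the sample values, and the nearest-centroid rule (`train_boundary`, `classify`) stand in for a real model and are not taken from any specific product.

```python
from statistics import mean

def train_boundary(samples):
    """Toy 'training': place the decision boundary midway between class means.

    samples is a list of (risk_score, label) pairs (hypothetical data).
    """
    benign = [score for score, label in samples if label == "benign"]
    malicious = [score for score, label in samples if label == "malicious"]
    return (mean(benign) + mean(malicious)) / 2

def classify(score, boundary):
    """Scores above the boundary are flagged as malicious."""
    return "malicious" if score > boundary else "benign"

# Clean training set (hypothetical values).
clean = [(1, "benign"), (2, "benign"), (3, "benign"),
         (8, "malicious"), (9, "malicious"), (10, "malicious")]

# Attacker-injected samples: malicious-looking scores mislabeled as benign.
poison = [(7, "benign"), (8, "benign"), (9, "benign")]

clean_boundary = train_boundary(clean)              # 5.5
poisoned_boundary = train_boundary(clean + poison)  # 7.0

probe = 6.5  # an attack that the clean model would catch
print(classify(probe, clean_boundary))     # malicious
print(classify(probe, poisoned_boundary))  # benign: the blind spot
```

The injected samples drag the "benign" average upward, so the boundary moves and a score that was previously flagged now passes. Real poisoning attacks are subtler, but the mechanism is the same: the training data, not the code, is what the adversary changes.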

The Shield: AI-Driven Defense Strategies

Defensive AI transforms the Security Operations Center (SOC) from a reactive hub into an autonomous engine. Behavioral analytics now identify "living off the land" attacks by detecting micro-deviations in user patterns that human analysts would miss. Machine learning models correlate vast volumes of telemetry to trigger autonomous patching and vulnerability management at machine speed, sharply narrowing windows of exposure. For a deeper analysis of these mechanisms, explore "AI and Cybersecurity: Navigating the Strategic Frontier in 2026" to master these critical domains.

The Sword: The Rise of Machine-Speed Attacks

Adversaries utilize generative models to break traditional security barriers. AI-generated phishing has eliminated the "broken English" red flags of the past; perfect syntax and localized context are now the baseline. Deepfake-as-a-Service platforms pose a direct threat to executive identity, with synthetic audio and video capable of bypassing biometric financial authorizations. Automated malware mutation is perhaps the most lethal development. AI can now create unique, polymorphic variants for every individual target, leaving traditional signature-based detection largely ineffective against 2026-era threats.

To stay ahead of these evolving vectors, security professionals need actionable frameworks that bridge the gap between technical theory and operational reality.

Moving Beyond the Hype: Addressing the #1 Objection to AI Automation

Fear of professional obsolescence often stalls critical innovation. In the 2026 digital battlefield, the Chief Information Security Officer (CISO) isn't replaced by a script; they're augmented by a force multiplier. While algorithms process 10,000 telemetry signals per second, the human strategist remains the ultimate arbiter of risk. We're witnessing a shift from tactical firefighting to strategic command. AI absorbs the exhausting work of repetitive triage, leaving the high-level defensive posture to the human expert. Mastery of AI in cybersecurity requires accepting that machines provide the speed, but humans provide the intent.

The Explainability Crisis in AI Security

Security teams frequently resist automated systems because they operate as a "black box." If an autonomous agent blocks a mission-critical B2B transaction during peak hours, the board demands a justification beyond "the model said so." Automated blocks without a clear "why" create organizational friction and erode trust between IT and the C-suite. To solve this, we implement explainability methods like SHAP (SHapley Additive exPlanations) to audit decisions and ensure compliance with evolving data privacy laws. Understanding the dual perspective on AI is essential for leaders who must defend against adversarial attacks while maintaining transparent internal controls. The vCISO acts as the vital translator here, converting complex neural network outputs into actionable business logic that stakeholders can verify.
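For readers who want to see what a Shapley-style audit actually produces, the sketch below computes exact Shapley attributions for a blocked transaction. It does not use the SHAP library itself; it implements the underlying Shapley formula directly so it runs with the standard library alone. The risk model, its weights, and the feature names (`amount_zscore`, `new_device`, `geo_velocity`) are hypothetical.

```python
from itertools import combinations
from math import factorial

# Hypothetical "typical transaction" used as the reference point.
BASELINE = {"amount_zscore": 0.0, "new_device": 0.0, "geo_velocity": 0.0}

def risk_score(x):
    """Toy risk model (illustrative weights) with one interaction term."""
    return (
        0.5 * x["amount_zscore"]
        + 1.0 * x["new_device"]
        + 0.8 * x["geo_velocity"]
        + 0.6 * x["new_device"] * x["geo_velocity"]
    )

def shapley_values(model, instance, baseline):
    """Exact Shapley attribution; absent features take their baseline value."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {name}) - value(members))
        phi[name] = total
    return phi

blocked = {"amount_zscore": 2.0, "new_device": 1.0, "geo_velocity": 1.0}
attributions = shapley_values(risk_score, blocked, BASELINE)
for feature, contribution in sorted(attributions.items(), key=lambda kv: -kv[1]):
    print(f"{feature}: {contribution:+.2f}")
```

The attributions sum to the gap between the blocked transaction's score and the baseline score, which is the "additive" property that makes the output auditable: each feature gets a share of the decision. Exact enumeration grows exponentially with the number of features, so production tooling relies on approximations.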

Justifying AI ROI to Stakeholders

Boards of directors prioritize fiscal resilience over technical specifications. To justify the investment, leaders must quantify the reduction in Time to Detection (TTD) and Time to Remediation (TTR). According to IBM's 2023 Cost of a Data Breach Report, organizations using extensive security AI and automation saved an average of $1.76 million compared to those that didn't. This isn't just a marginal gain; it's a fundamental shift in the cost of defense. For a detailed breakdown of reporting these metrics, see our guide, "Cyber Security Firms: A Strategic Guide for Board-Level Risk Management in 2026." Effectively, AI in cybersecurity reduces the "cybersecurity talent gap" tax by allowing a lean team of three analysts to perform the workload of a dozen through automated correlation and response.
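As a worked example of the metrics above, the following sketch computes mean TTD and mean TTR from incident timestamps. The incident records are invented for illustration, and the definitions used here (TTD measured from compromise to detection, TTR from detection to remediation) are one common convention, assumed rather than taken from a specific standard.

```python
from datetime import datetime
from statistics import mean

FMT = "%Y-%m-%d %H:%M"

# Hypothetical incidents: (compromise, detection, remediation) timestamps.
INCIDENTS = [
    ("2025-01-03 02:00", "2025-01-03 08:00", "2025-01-03 20:00"),
    ("2025-02-11 10:00", "2025-02-11 12:00", "2025-02-11 18:00"),
    ("2025-03-20 23:00", "2025-03-21 03:00", "2025-03-21 09:00"),
]

def hours_between(start, end):
    """Elapsed hours between two timestamp strings."""
    delta = datetime.strptime(end, FMT) - datetime.strptime(start, FMT)
    return delta.total_seconds() / 3600

def mean_ttd_ttr(incidents):
    """Return (mean TTD, mean TTR) in hours across all incidents."""
    ttd = mean(hours_between(c, d) for c, d, _ in incidents)
    ttr = mean(hours_between(d, r) for _, d, r in incidents)
    return ttd, ttr

ttd_hours, ttr_hours = mean_ttd_ttr(INCIDENTS)
print(f"Mean TTD: {ttd_hours:.1f} h, mean TTR: {ttr_hours:.1f} h")
# Mean TTD: 4.0 h, mean TTR: 8.0 h
```

Running the same calculation on incidents before and after an AI deployment gives the board a like-for-like comparison instead of a vendor claim.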

The Critical Role of Human-in-the-Loop (HITL)

High-stakes security interventions cannot be left entirely to unmonitored silicon. Human-in-the-loop (HITL) protocols ensure that while AI operates at wire speed to contain a threat, the final decision for scorched-earth responses—such as shutting down a regional data center—remains with a human officer. This partnership balances machine efficiency with human ethics and organizational context. It's a disciplined approach to defense. We use AI to sift through the noise, but we rely on human intuition to navigate the "gray zone" of sophisticated social engineering and complex supply chain attacks.
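A HITL protocol can be reduced to a simple gate in the response pipeline. The sketch below is one possible shape for that gate, not a reference implementation: the `Impact` tiers, the `ProposedAction` type, and the action names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum

class Impact(Enum):
    LOW = 1   # e.g. quarantine a single endpoint
    HIGH = 2  # e.g. shut down a regional data center

@dataclass
class ProposedAction:
    name: str
    impact: Impact

def decide(action, human_approval=None):
    """Return 'execute', 'pending', or 'rejected' for an AI-proposed action.

    Low-impact containment runs automatically at machine speed.
    High-impact actions wait until a human officer approves or rejects them.
    """
    if action.impact is Impact.LOW:
        return "execute"
    if human_approval is None:
        return "pending"
    return "execute" if human_approval else "rejected"

print(decide(ProposedAction("isolate_host", Impact.LOW)))                # execute
print(decide(ProposedAction("shutdown_datacenter", Impact.HIGH)))        # pending
print(decide(ProposedAction("shutdown_datacenter", Impact.HIGH), True))  # execute
```

The design choice worth noting is the explicit "pending" state: the system never treats silence as consent for a scorched-earth response.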

Implementing an Actionable AI Security Framework: From Boardroom to SOC

- Step 1: Conduct an AI Risk Assessment. Identify "Shadow AI" within the enterprise. A 2024 Salesforce report revealed that 28% of workers use unapproved AI tools. You can't secure what you can't see; mapping these unauthorized deployments is the first priority.

- Step 2: Establish a Zero-Trust Architecture. Assume that every identity is potentially compromised by AI-driven social engineering. Trust nothing; verify everything.

- Step 3: Evaluate Third-Party AI Vendors. Use a "Trust-but-Verify" checklist. Demand transparency regarding model training data and residency to avoid supply chain contamination.

- Step 4: Develop a Cyber-Resilient Culture. Move security from a back-office cost center to a boardroom priority. Use workshops to align executive strategy with technical reality.

- Step 5: Continuous Monitoring and Iteration. The pace of innovation means your framework is obsolete within six months without constant updates. Implement automated red-teaming to test defenses against evolving adversarial AI.
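Step 1 is often the easiest place to start with real data. The sketch below shows one way to surface "Shadow AI" from outbound proxy logs by comparing destinations against an approved list. The domain names, the two-column log format, and the `find_shadow_ai` helper are hypothetical placeholders; a real deployment would draw on your own egress logs and tool inventory.

```python
from collections import Counter

# Hypothetical inventories; substitute your organization's own lists.
KNOWN_AI_DOMAINS = {"chat.example-ai.com", "api.example-llm.io", "copilot.example.dev"}
APPROVED_AI_DOMAINS = {"copilot.example.dev"}

# Hypothetical log format: "<user> <destination-host>" per line.
PROXY_LOG = """\
alice chat.example-ai.com
bob copilot.example.dev
alice chat.example-ai.com
carol api.example-llm.io
dave intranet.example.com
"""

def find_shadow_ai(log_text):
    """Count requests per (user, host) to AI services that are not approved."""
    hits = Counter()
    for line in log_text.splitlines():
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines
        user, host = parts
        if host in KNOWN_AI_DOMAINS and host not in APPROVED_AI_DOMAINS:
            hits[(user, host)] += 1
    return hits

shadow = find_shadow_ai(PROXY_LOG)
for (user, host), count in shadow.most_common():
    print(f"{user} -> {host}: {count} request(s)")
```

The output is an inventory, not a verdict: it tells you where to begin the conversation about sanctioned alternatives.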

The Executive AI Strategy Workshop

Leadership often views AI as a productivity tool rather than a strategic vulnerability. Training the C-suite on the nuances of AI in cybersecurity is the most critical defense layer an organization can build. Executives must master domains ranging from data privacy to adversarial resilience to make informed budget decisions. Engaging an AI cybersecurity consultant helps facilitate this strategic alignment. These experts bridge the gap between technical risk and business continuity, ensuring the organization is prepared for the 2026 threat landscape.

Zero-Trust in the Age of AI

Identity is the new perimeter. In an era where 90% of online content may be synthetically generated by 2026, traditional passwords and even basic biometrics are insufficient. Deepfakes make voice and video verification unreliable for high-stakes transactions. Implementing multi-modal authentication that requires physical hardware keys or complex behavioral biometrics is now essential. Many organizations utilize virtual CISO consulting services to design these architectures. This approach provides the high-level expertise needed to combat AI-driven identity compromise without the overhead of a full-time executive hire.
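The policy described above can be sketched as a step-up authentication rule. This is an illustration under stated assumptions: the `AuthContext` fields, the dollar threshold, and the behavioral score cutoff are hypothetical, and a real zero-trust design would evaluate many more signals.

```python
from dataclasses import dataclass

HIGH_STAKES_USD = 10_000   # hypothetical threshold for step-up checks
MIN_BEHAVIOR_SCORE = 0.7   # hypothetical cutoff; 1.0 means fully typical behavior

@dataclass
class AuthContext:
    password_ok: bool
    hardware_key_ok: bool
    voice_match: bool
    behavior_score: float

def authorize(ctx, amount_usd):
    """Approve a transaction only if the factors match its risk level."""
    if not ctx.password_ok:
        return False
    if amount_usd < HIGH_STAKES_USD:
        return True
    # High-stakes: voice is deliberately ignored because deepfakes can spoof it.
    # Require a hardware key or a strong behavioral-biometric signal instead.
    return ctx.hardware_key_ok or ctx.behavior_score >= MIN_BEHAVIOR_SCORE

deepfake_caller = AuthContext(password_ok=True, hardware_key_ok=False,
                              voice_match=True, behavior_score=0.2)
print(authorize(deepfake_caller, 250_000))  # False
```

A convincing synthetic voice passes `voice_match`, but the request still fails because that signal carries no weight for high-stakes transactions.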

Mastering the Intersection: Strategic Advisory for the Age of AI

Dr. Daniel Glauber operates at the precise point where academic rigor meets tactical execution. He doesn't just discuss the potential of AI in cybersecurity; he builds the frameworks that allow leaders to master it. Organizations frequently struggle to turn high-level AI concepts into defensive operations. Dr. Glauber solves this by providing a structured path from vulnerability to strategic command, ensuring that your defense is as sophisticated as the threats it faces.

Strategic Advisory and vCISO Leadership

Mid-to-large organizations require consistent, expert guidance to navigate the complexities of the 2026 digital battlefield. A monthly vCISO retainer offers a high-impact solution for teams that need executive-level security leadership without the overhead of a full-time hire. This partnership ensures that your security posture evolves as quickly as the tools used by adversaries. The effective application of AI in cybersecurity requires a steady hand at the helm to manage both technical and cultural shifts.

- Customized Security Roadmaps: Every technological environment is unique, requiring tailored strategies that address specific vulnerabilities and business goals rather than generic templates.

- Board-Level Briefings: Dr. Glauber translates technical AI risks into financial and operational metrics that resonate with stakeholders and decision-makers, facilitating faster budget approvals.

- Continuous Strategic Evolution: As new neural network attack vectors emerge, the vCISO provides the necessary pivots to maintain a robust defense throughout the year.

Next Steps: Securing Your Organizational Future

The journey toward mastery begins with foundational knowledge. The book, "Cybersecurity in the Age of Artificial Intelligence," serves as the definitive guide for this transition. Across 18 comprehensive chapters, it provides the actionable frameworks needed to secure modern enterprises. It includes 50+ real-world case studies that ground complex theories in proven results, making it an essential resource for any serious security professional looking to stay ahead of the curve.

Beyond the written word, Dr. Glauber offers keynote speaking engagements designed to catalyze organizational change. These sessions move beyond the hype, providing audiences with a clear vision of the future and the steps required to reach it. Engaging with these resources is the first step toward moving your organization from a state of uncertainty to one of strategic readiness. In the age of AI, preparedness is the only true defense.

Mastering the 2026 Digital Battlefield

The transition toward the 2026 strategic turning point demands more than just awareness; it requires a total realignment of your defensive posture. We've explored how AI in cybersecurity acts as both a sophisticated shield and a relentless sword, necessitating a move beyond automation hype toward actionable frameworks that empower the SOC. Organizations must bridge the gap between boardroom theory and technical application to survive the next generation of adversarial attacks. Success in this era isn't accidental. It's built on a foundation of proven expertise and data-driven tactics.

Dr. Daniel Glauber brings 30+ years of technology and innovation experience to the frontlines of digital defense. As the author of "Cybersecurity in the Age of Artificial Intelligence" and a strategist who has developed 50+ real-world case studies, he provides the clarity needed to navigate complex neural networks and zero-trust architectures. You don't have to face these rapidly evolving threats alone. The digital landscape is shifting rapidly, but with the right strategic framework, your team can turn these emerging challenges into a decisive competitive advantage.

Secure your organization’s future with Dr. Daniel Glauber’s strategic advisory services.

Frequently Asked Questions

How is AI currently being used in cybersecurity attacks?

AI accelerates the reconnaissance and execution phases of the kill chain by automating vulnerability scanning and malware mutation. Threat actors use Large Language Models to craft hyper-personalized phishing lures that bypass traditional secure email gateways. According to the 2024 IBM Cost of a Data Breach Report, the integration of AI into cyberattacks has reduced the time to exploit known vulnerabilities by 30 percent. This creates a high-velocity environment where static defenses fail.

Will AI eventually replace human cybersecurity professionals?

AI won't replace human cybersecurity professionals; it'll augment their capacity to manage the 2026 digital battlefield. While algorithms handle the 85 percent of Tier 1 SOC alerts that are repetitive, humans remain essential for high-level strategic decision-making and incident response. The goal is a hybrid model of human-machine collaboration. Mastery of AI-driven cybersecurity tools is the new baseline for career longevity in the Age of Artificial Intelligence.

What are the biggest risks of using AI for cyber defense?

The primary risks involve data poisoning and the creation of "black box" logic that obscures why a specific security decision was made. If an attacker injects malicious data into your training set, the model's accuracy can drop by 25 percent according to NIST research. This leads to false negatives where critical threats are ignored. Organizations must implement rigorous validation frameworks to ensure their defensive models remain resilient against such manipulation.

How can a small business afford AI-driven cybersecurity tools?

Small businesses can access AI-driven security through Managed Service Providers (MSPs) that offer "Security as a Service" models. This approach aggregates the high costs of neural networks across thousands of clients, making enterprise-grade protection accessible for a fixed monthly fee. A 2023 survey by Canalys found that 60 percent of small firms now utilize AI via cloud-based platforms. It's a cost-effective way to deploy sophisticated countermeasures without a massive capital investment.

What is adversarial AI and why should my board be worried?

Adversarial AI involves attackers using machine learning to deceive or manipulate your own security models. Boards should be concerned because these tactics can bypass 90 percent of traditional signature-based detection systems. If an adversary successfully fools your AI, they gain persistent access without triggering an alarm. It's a fundamental shift in the threat landscape that requires a strategic transition toward Zero-Trust Architecture and robust model monitoring.

What are the best practices for implementing AI in an existing security stack?

Successful implementation begins with a data-first approach and a clear mapping of your organization's attack vectors. You shouldn't deploy AI as a standalone solution; it must be integrated into a Zero-Trust framework to provide layered defense. Start with a pilot program targeting a specific domain like identity management. This allows your team to achieve mastery over the tool's nuances before scaling across the entire enterprise security stack.

Can AI help in detecting zero-day vulnerabilities?

AI detects zero-day vulnerabilities by identifying behavioral anomalies that deviate from established network baselines. Unlike traditional systems that rely on known signatures, neural networks analyze traffic patterns to spot the 0.1 percent of activity that signals a novel exploit. Research from Mandiant indicates that AI-enabled systems identify unknown threats 40 percent faster than manual analysis. This rapid detection is critical for neutralizing threats before they can move laterally through your network.
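The baseline-deviation idea can be illustrated with a deliberately simple statistical stand-in. The sketch below flags observations that sit more than three standard deviations from a learned baseline; production systems use far richer models, and the traffic figures and the `find_anomalies` helper are hypothetical.

```python
from statistics import mean, stdev

def find_anomalies(baseline, observed, threshold=3.0):
    """Return indexes of observations whose z-score exceeds the threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is anomalous.
        return [i for i, value in enumerate(observed) if value != mu]
    return [i for i, value in enumerate(observed)
            if abs(value - mu) / sigma > threshold]

# Hypothetical outbound connections per minute from one host.
baseline = [48, 52, 50, 49, 51, 50, 47, 53]
observed = [50, 52, 49, 120, 51]

anomalies = find_anomalies(baseline, observed)
print(anomalies)  # [3]
```

No signature for the exploit is needed: the fourth reading is flagged purely because it departs from what "normal" looked like for that host.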

How does generative AI change the landscape of social engineering?

Generative AI has eliminated the linguistic errors and tells that previously identified 75 percent of phishing attempts. Attackers use these tools to generate deepfake audio and perfectly written emails that mimic the tone of specific executives. This creates a crisis of trust within the digital battlefield. Organizations must respond by implementing stricter multi-factor authentication and training employees to verify any sensitive request through out-of-band communication channels.